darkfield illumination: A specialized illumination technique that uses oblique illumination to enhance contrast in subjects that are not imaged well under normal illumination conditions.

data fusion: See sensor fusion.

data integration: See sensor fusion.

data parallelism: The parallel structuring of the input data to programs, of the programs themselves, or of the programming language used. Data parallelism is a useful model for much image processing because the same operation can be applied independently and in parallel at all pixels in the image.

data reduction: A general term for processes that 1) reduce the number of data points, e.g., by subsampling, by using cluster centers of mass as representative points, or by decimation, or 2) reduce the number of dimensions in each data point, e.g., by projection or principal component analysis (PCA).

data structure: A fundamental concept in programming: a collection of computer data organized in a precise structure, for instance a tree (see, e.g., quadtree), a queue or a stack. Data structures are accompanied by sets of procedures, or libraries, implementing various types of data manipulation, for instance storage and indexing.

DCT: See discrete cosine transform.

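deblur: To remove the effect of a known blurring function on an image. If an observed image $I$ is the convolution of an unknown image $I'$ with a known blurring kernel $B$, so that $I = I' * B$, deblurring is the process of computing $I'$ given $I$ and $B$. See also deconvolution.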

decentering distortion (lens): Lens decentering is a common cause of tangential distortion. It arises when the lens elements are not perfectly aligned and creates an asymmetric component to the distortion.

decimation: 1) In digital signal processing, a filter that keeps one sample out of every $N$, where $N$ is a fixed integer.

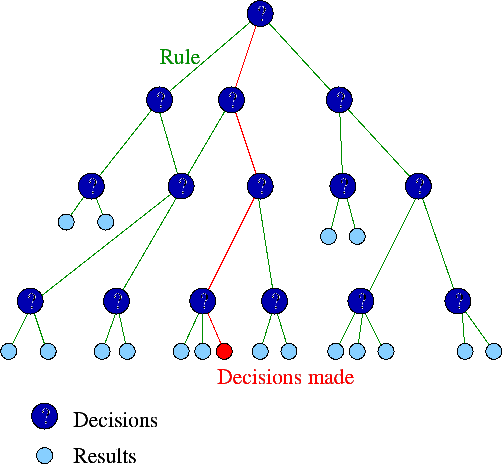

decision tree: Tools for helping to choose between several courses of action. They are an effective structure within which an agent can search options and investigate the possible outcomes. They also help to balance the risks and rewards associated with each possible course of action.

decoding: Converting a signal that has been encoded back into its original form (lossless coding) or into a form close to the original (lossy coding). See also image compression.

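decomposable filters: A complex filter that can be applied as a number of simpler filters applied one after the other. For example, the 2D Laplacian of Gaussian filter can be decomposed into four simpler 1D filters, because it is the sum of two separable terms, $g''(x)\,g(y) + g(x)\,g''(y)$, where $g$ is a 1D Gaussian.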
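
As a minimal pure-Python sketch of that decomposition (the function names are illustrative, and zero padding at the image borders is an assumption), the code below filters an image once with a directly sampled 2D LoG kernel and once with four 1D passes implementing $g''(x)\,g(y) + g(x)\,g''(y)$. The two results agree to rounding error.

```python
import math


def gaussian_1d(sigma, radius):
    """Sampled 1D Gaussian g(x) and its second derivative g''(x) on -radius..radius."""
    xs = range(-radius, radius + 1)
    g = [math.exp(-x * x / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2) for x in xs]
    g2 = [(x * x - sigma ** 2) / sigma ** 4 * gx for x, gx in zip(xs, g)]
    return g, g2


def log_kernel_2d(sigma, radius):
    """Direct sampling of the 2D Laplacian of Gaussian, indexed as kernel[y][x]."""
    kernel = []
    for y in range(-radius, radius + 1):
        row = []
        for x in range(-radius, radius + 1):
            r2 = x * x + y * y
            gauss = math.exp(-r2 / (2 * sigma ** 2)) / (2 * math.pi * sigma ** 2)
            row.append((r2 - 2 * sigma ** 2) / sigma ** 4 * gauss)
        kernel.append(row)
    return kernel


def convolve_2d(img, kernel):
    """Same-size 2D convolution with zero padding (odd-sized square kernel)."""
    h, w, r = len(img), len(img[0]), len(kernel) // 2
    return [[sum(kernel[i + r][j + r] * img[y - i][x - j]
                 for i in range(-r, r + 1) for j in range(-r, r + 1)
                 if 0 <= y - i < h and 0 <= x - j < w)
             for x in range(w)] for y in range(h)]


def convolve_rows(img, f):
    """1D convolution along x (within each row), zero padding."""
    w, r = len(img[0]), len(f) // 2
    return [[sum(f[j + r] * row[x - j] for j in range(-r, r + 1) if 0 <= x - j < w)
             for x in range(w)] for row in img]


def convolve_cols(img, f):
    """1D convolution along y (within each column), zero padding."""
    h, w, r = len(img), len(img[0]), len(f) // 2
    return [[sum(f[i + r] * img[y - i][x] for i in range(-r, r + 1) if 0 <= y - i < h)
             for x in range(w)] for y in range(h)]


def log_separable(img, sigma, radius):
    """LoG as g''(x)g(y) + g(x)g''(y): four 1D passes instead of one 2D kernel."""
    g, g2 = gaussian_1d(sigma, radius)
    a = convolve_cols(convolve_rows(img, g2), g)  # second derivative along x
    b = convolve_cols(convolve_rows(img, g), g2)  # second derivative along y
    return [[p + q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]
```

For a $(2r+1) \times (2r+1)$ kernel, the separable route costs about $4(2r+1)$ multiplications per pixel instead of $(2r+1)^2$.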

deconvolution: The inverse process of convolution. Deconvolution is used to remove certain signals (for example blurring) from images by inverse filtering (see deblur). For a convolution producing image $h = f * g$ from an image $f$ and a kernel $g$, deconvolution recovers $f$ given $h$ and $g$.
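
Inverse filtering can be sketched in 1D with a naive discrete Fourier transform: by the convolution theorem $H = F \cdot G$, so $F = H / G$. This sketch assumes circular (periodic) convolution, a known kernel whose spectrum has no zeros, and noise-free data. With noise, dividing by small $|G|$ amplifies it, which is why regularized methods such as Wiener filtering are normally preferred. The function names are illustrative.

```python
import cmath


def dft(x):
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n)) for k in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def circular_convolve(f, g):
    """h[s] = sum_t f[t] * g[(s - t) mod n], the periodic convolution the DFT diagonalizes."""
    n = len(f)
    return [sum(f[t] * g[(s - t) % n] for t in range(n)) for s in range(n)]


def deconvolve(h, g, eps=1e-12):
    """Inverse filtering F = H / G; valid only if the kernel spectrum has no (near-)zeros."""
    H, G = dft(h), dft(g)
    if min(abs(v) for v in G) < eps:
        raise ValueError("kernel spectrum has (near-)zeros; inverse filtering is unstable")
    return [v.real for v in idft([a / b for a, b in zip(H, G)])]
```

For example, blurring `[0, 0, 1, 1, 1, 0, 0, 0]` with the kernel `[0.6, 0.2, 0, 0, 0, 0, 0, 0.2]` and then calling `deconvolve` recovers the original signal to rounding error.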

defocus: Blurring of an image, either accidental or deliberate, caused by using or estimating incorrect focus or viewpoint parameters. See also shape from focus, shape from defocus.

defocus blur: Deformation of an image due to the predictable behavior of optics when incorrectly adjusted. The blurring is the result of light rays that, after entering the optical system, misconverge on the imaging plane. If the camera parameters are known in advance, the blurring can be partially corrected.

deformable model: Object descriptors that model a specific class of deformable objects (e.g. eyes, hands) where the shapes vary according to the values of the parameters. If the general, but not specific, characteristics of an object type are known, then a deformable model can be constructed and used as a matching template for new data. The degree of deformation needed to match the shape can be used as a matching score. See also modal deformable model, geometric deformable model.

deformable shape: See deformable model.

deformable superquadric: A type of superquadric volumetric model that can be deformed by bending, twisting, etc. in order to fit to the data being modeled.

deformable template model: See deformable model.

deformation energy: The metric that must be minimized when determining an active shape model. It comprises terms for both internal energy (or force) arising from the model shape deformation and external energy (or force) arising from the discrepancy between the model shape and the data.

degradation: A loss of quality suffered by an image whose content is corrupted by unwanted processes. For instance, MPEG compression-decompression can alter some intensities, so that the image is degraded. See also JPEG image compression, image noise.

degree of freedom: A free variable in a given function. For instance, rotations in 3D space depend on three angles, so that a rotation matrix has nine entries but only three degrees of freedom.

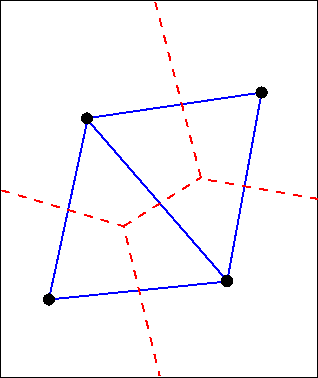

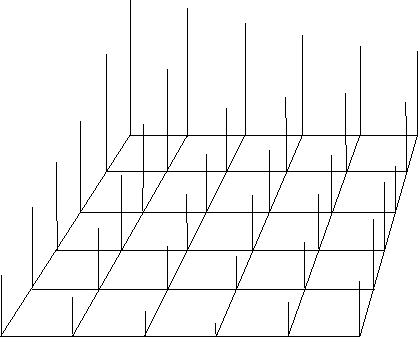

Delaunay triangulation: The Delaunay graph of a point set can be constructed from its Voronoi diagram by connecting the points whose Voronoi polygons are adjacent. For points in general position, these connections form the Delaunay triangulation. The triangulation has the property that the circumcircle of every triangle contains no other point of the set. The approach can be used to construct a polyhedral surface approximation from a set of 3D sample points. The solid lines connecting the points below are the Delaunay triangulation and the dashed lines are the boundaries of the Voronoi diagram.
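
The empty-circumcircle property can be checked directly. The brute-force sketch below (illustrative names; it is $O(n^4)$, so it suits only a handful of points, and it assumes points in general position) keeps every triangle whose circumcircle contains no other input point, using the standard in-circle determinant. Practical implementations use incremental algorithms such as Bowyer-Watson, or a library routine such as `scipy.spatial.Delaunay`.

```python
from itertools import combinations


def orient(a, b, c):
    """Twice the signed area of triangle abc; positive if a, b, c are counter-clockwise."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def in_circumcircle(a, b, c, d):
    """True if d lies strictly inside the circumcircle of the counter-clockwise triangle abc."""
    m = []
    for p in (a, b, c):
        dx, dy = p[0] - d[0], p[1] - d[1]
        m.append((dx, dy, dx * dx + dy * dy))
    det = (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
           - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
           + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))
    return det > 0


def delaunay_bruteforce(points):
    """Keep every triangle (as index triples) whose circumcircle contains no other point."""
    triangles = []
    for i, j, k in combinations(range(len(points)), 3):
        a, b, c = points[i], points[j], points[k]
        area = orient(a, b, c)
        if area == 0:
            continue  # collinear: no circumcircle
        if area < 0:
            b, c = c, b  # reorder to counter-clockwise for the in-circle test
        if not any(in_circumcircle(a, b, c, points[m])
                   for m in range(len(points)) if m not in (i, j, k)):
            triangles.append((i, j, k))
    return triangles
```

For the four corners of a square plus its center, the sketch returns the four triangles that fan out from the center. The two triangles using only corners are rejected because the center lies inside their circumcircle.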

demon: A program that runs in the background, for instance performing checks or guaranteeing the correct functioning of a module of a complex system.

demosaicing: The process of converting a single-color-per-pixel image (as captured by most digital cameras) into a three-color-per-pixel image.

Dempster-Shafer: A belief modeling approach for testing a hypothesis that allows information, in the form of beliefs, to be combined into a plausibility measure for that hypothesis.
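
The core step in this approach is Dempster's rule of combination. It multiplies the masses of every pair of focal elements, assigns the product to their intersection, and renormalizes by the mass that fell on the empty set (the conflict). Below is a minimal sketch, assuming mass functions are stored as dictionaries keyed by `frozenset`s of hypotheses. The function names and the two "detector" mass assignments are made up for illustration.

```python
def combine(m1, m2):
    """Dempster's rule of combination for two mass functions.

    Each mass function maps a frozenset of hypotheses (a focal element) to its mass.
    """
    joint, conflict = {}, 0.0
    for b, mass_b in m1.items():
        for c, mass_c in m2.items():
            a = b & c
            if a:
                joint[a] = joint.get(a, 0.0) + mass_b * mass_c
            else:
                conflict += mass_b * mass_c  # mass that would land on the empty set
    if conflict >= 1.0:
        raise ValueError("sources are in total conflict")
    return {a: v / (1.0 - conflict) for a, v in joint.items()}


def belief(m, a):
    """Total mass committed to subsets of a."""
    return sum(v for b, v in m.items() if b <= a)


def plausibility(m, a):
    """Total mass that does not contradict a (focal elements intersecting a)."""
    return sum(v for b, v in m.items() if b & a)


# Hypothetical example: two detectors give evidence about "edge" versus "noise".
EDGE, NOISE = frozenset({"edge"}), frozenset({"noise"})
THETA = EDGE | NOISE  # the whole frame of discernment ("don't know")
m = combine({EDGE: 0.6, THETA: 0.4}, {EDGE: 0.5, NOISE: 0.3, THETA: 0.2})
# conflict = 0.6 * 0.3 = 0.18, so m[EDGE] = 0.62 / 0.82, about 0.756
```

The belief and plausibility of a hypothesis bracket the support it receives. Here the belief in "edge" is 0.62/0.82 and its plausibility is 0.70/0.82.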

dense reconstruction: A class of techniques estimating depth at each pixel of an input image or sequence, thus generating a dense sampling of the 3D surfaces imaged. This can be achieved, for instance, by range sensing or stereo vision.

dense stereo matching: A class of methods establishing the correspondence (see stereo correspondence problem) between all pixels in a stereo pair of images. The generated disparity map can then be used for depth estimation.

densitometry: A class of techniques that estimate the density of a material from images, for instance bone density in the medical domain (bone densitometry).

depth: Distance of scene points from either the camera center or the camera imaging plane. In a range image, the intensity value in the image is a measure of depth.

depth estimation: The process of estimating the distance between a sensor (e.g. a stereo pair) and a part of the scene being imaged. Stereo vision and range sensing are two well-known ways to estimate depth.

depth from defocus: The depth from defocus method uses the direct relationships among the depth, camera parameters and the amount of blurring in images to derive the depths from parameters that can be directly measured.

depth from focus: A method to determine distance to one point by taking many images in better and better focus. This is also called autofocus or software focus.

depth image: See range image.

depth image edge detector: See range image edge detector.

depth map: See range image.

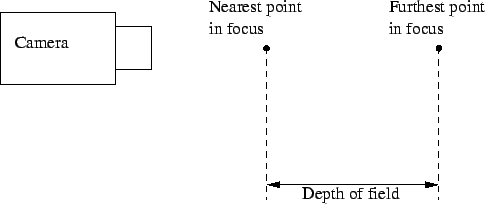

depth of field: The distance between the nearest and the farthest point in focus for a given camera:

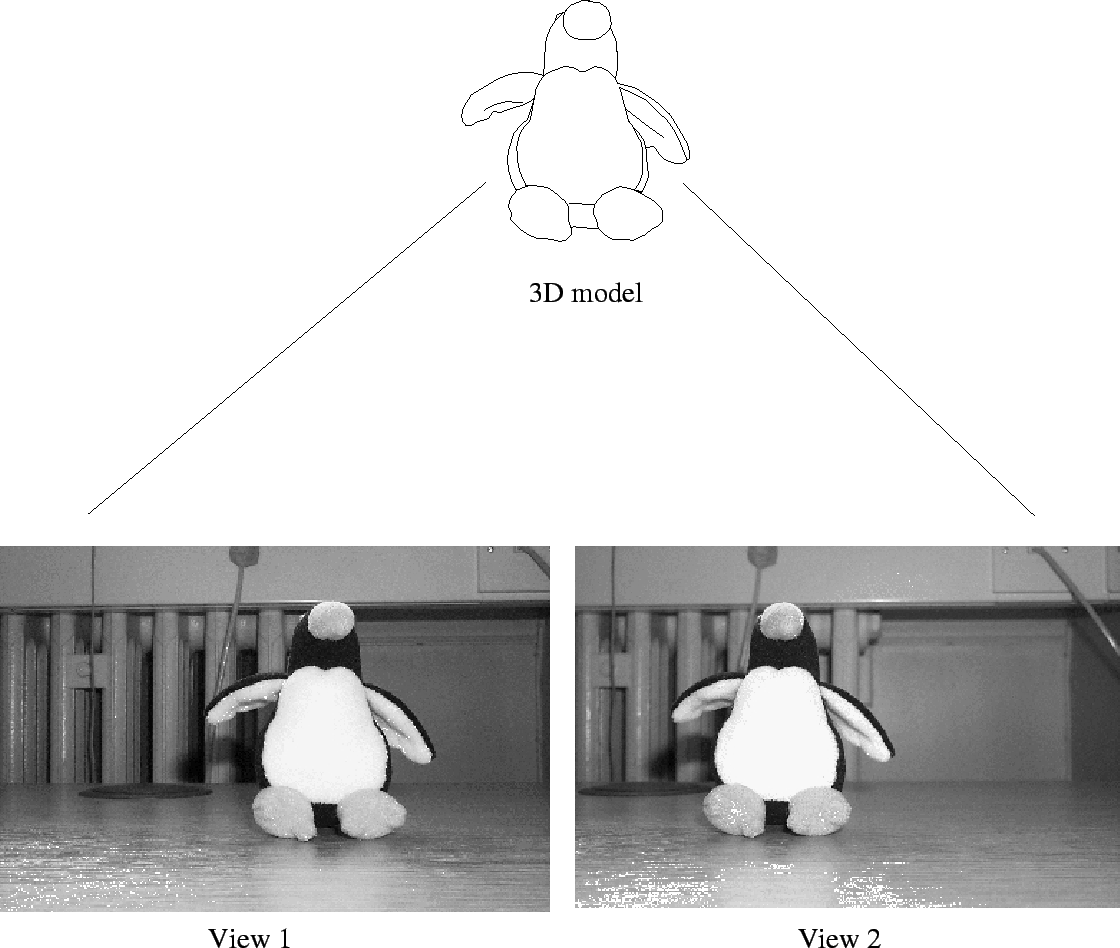

depth perception: The ability to perceive distances from visual stimuli, for instance motion or stereo vision.

depth sensor: See range sensor.

Deriche edge detector: A convolution filter for edge finding, similar to the Canny edge detector. Deriche uses a different optimal operator in which the filter is assumed to have infinite extent. The resulting convolution filter is sharper than the derivative of the Gaussian that Canny uses.

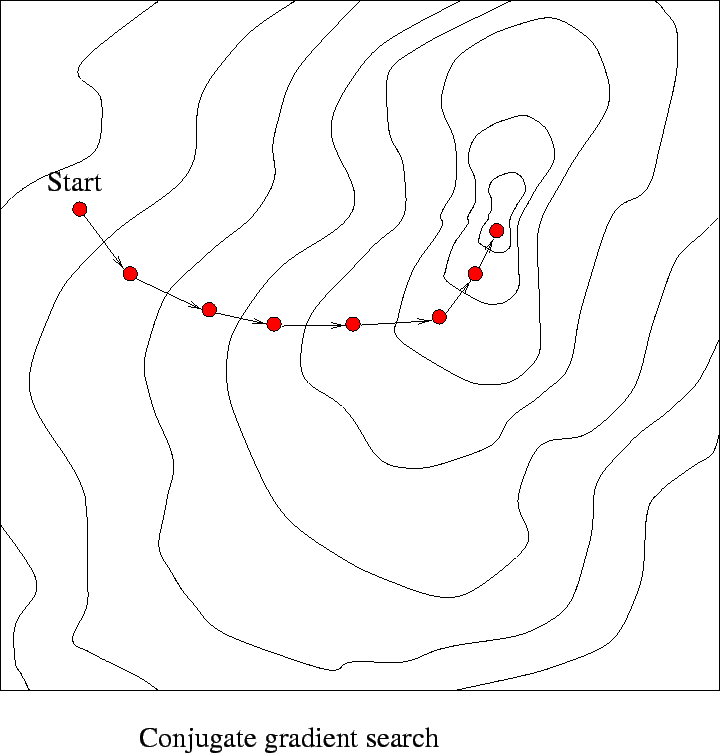

derivative based search: Numerical optimization methods that assume the gradient can be estimated. An example is the quasi-Newton approach, which attempts to generate an estimate of the inverse Hessian matrix; this estimate is then used to determine the next iteration point.
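
A minimal quasi-Newton sketch follows. It uses the BFGS update of the inverse-Hessian estimate `H`, together with a simple backtracking (Armijo) line search. The function name, tolerances, line-search constants and the quadratic test function are illustrative assumptions. In practice one would normally call a library routine such as `scipy.optimize.minimize(..., method="BFGS")`.

```python
def bfgs(f, grad, x0, tol=1e-8, max_iter=200):
    """Minimize f with BFGS, keeping an estimate H of the inverse Hessian."""
    n = len(x0)
    x = [float(v) for v in x0]
    H = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    g = grad(x)
    for _ in range(max_iter):
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break
        # quasi-Newton search direction p = -H g
        p = [-sum(H[i][j] * g[j] for j in range(n)) for i in range(n)]
        slope = sum(gi * pi for gi, pi in zip(g, p))  # negative while H is positive definite
        fx, t = f(x), 1.0
        # backtrack until the Armijo sufficient-decrease test passes
        while t > 1e-12 and f([xi + t * pi for xi, pi in zip(x, p)]) > fx + 1e-4 * t * slope:
            t *= 0.5
        x_new = [xi + t * pi for xi, pi in zip(x, p)]
        g_new = grad(x_new)
        s = [a - b for a, b in zip(x_new, x)]
        y = [a - b for a, b in zip(g_new, g)]
        sy = sum(a * b for a, b in zip(s, y))
        if sy > 1e-12:  # curvature condition; otherwise keep H unchanged
            rho = 1.0 / sy
            Hy = [sum(H[i][j] * y[j] for j in range(n)) for i in range(n)]
            yHy = sum(a * b for a, b in zip(y, Hy))
            # expanded form of H <- (I - rho s y^T) H (I - rho y s^T) + rho s s^T
            H = [[H[i][j] - rho * (Hy[i] * s[j] + s[i] * Hy[j])
                  + (rho * rho * yHy + rho) * s[i] * s[j]
                  for j in range(n)] for i in range(n)]
        x, g = x_new, g_new
    return x


def quad(v):
    """Illustrative test function with its minimum at (1, -2)."""
    return (v[0] - 1) ** 2 + 10 * (v[1] + 2) ** 2


def quad_grad(v):
    return [2 * (v[0] - 1), 20 * (v[1] + 2)]


x_min = bfgs(quad, quad_grad, [0.0, 0.0])
```

The update is skipped whenever $s^\top y \le 0$. This keeps `H` positive definite, so `p` remains a descent direction.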

DFT: See discrete Fourier transform.

diagram analysis: Syntactic analysis of images of line drawings, possibly with text in a report or other document. This field is closely related to the analysis of visual languages.

dichroic filter: A filter that selectively transmits light in a given range of wavelengths and reflects the rest.

dichromatic model: The dichromatic model states that the light reflected from a surface is the sum of two components, body and interface reflectance. Body reflectance follows Lambert's law. Interface reflectance models highlights. The model has been applied to several computer vision tasks including color constancy, shape recovery and color image segmentation. See also color.

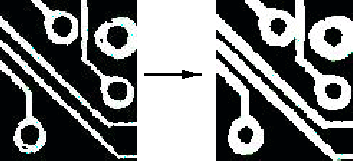

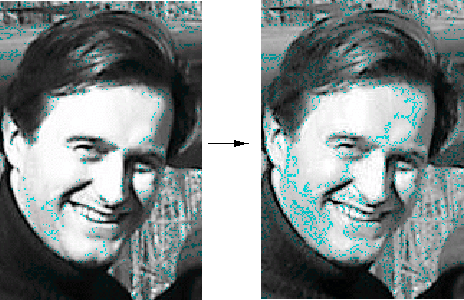

difference image: An image computed as pixelwise difference of two other images, that is, each pixel in the difference image is the difference between the pixels at the same location in the two input images. For example, in the figure below the right image is the difference of the left and middle images (after adding 128 for display purposes).

diffeomorphism: A differentiable one-to-one map between manifolds whose inverse is also differentiable.

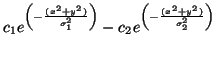

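difference-of-Gaussians operator: A convolution operator used to locate edges in a gray-scale image using an approximation to the Laplacian of Gaussian operator. In 2D the convolution mask is:

$$\mathrm{DoG}(x, y) = \frac{1}{2\pi\sigma_1^2}\, e^{-\frac{x^2 + y^2}{2\sigma_1^2}} - \frac{1}{2\pi\sigma_2^2}\, e^{-\frac{x^2 + y^2}{2\sigma_2^2}}$$

where $\sigma_1 < \sigma_2$ are the standard deviations of the two Gaussians.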
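
The sketch below samples the mask $G_{\sigma_1} - G_{\sigma_2}$ on a square grid. The function name, the radius and the ratio $\sigma_2/\sigma_1 = 1.6$ are illustrative choices; 1.6 is a commonly quoted value. The sampled mask has a positive center and a negative surround, and it sums to approximately zero, so edges are found at zero crossings of the filtered image.

```python
import math


def dog_kernel(sigma1, sigma2, radius):
    """Sampled 2D difference-of-Gaussians mask G_sigma1 - G_sigma2, indexed k[y][x]."""
    def gauss(r2, sigma):
        return math.exp(-r2 / (2 * sigma * sigma)) / (2 * math.pi * sigma * sigma)

    span = range(-radius, radius + 1)
    return [[gauss(x * x + y * y, sigma1) - gauss(x * x + y * y, sigma2) for x in span]
            for y in span]
```

The radius should be a few times $\sigma_2$ so that the truncated tails are negligible and the mask keeps a near-zero sum.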

differential geometry: A field of mathematics studying the local derivative-based properties of curves and surfaces, for instance tangent plane and curvature.

differential invariant: Image descriptors that are invariant under geometric transformations as well as illumination changes. Invariant descriptors are generally classified as global invariants (corresponding to object primitives) and local invariants (typically based on derivatives of the image function). The image function is always assumed to be continuous and differentiable.

differential pulse code modulation: A technique for converting an analogue signal to binary by sampling it and expressing the sampled values in binary, then reducing the bit rate by encoding only the difference between each sample and a prediction from previous samples, exploiting the fact that consecutive samples usually change little.
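
A minimal DPCM sketch follows. It assumes the simplest predictor, the previously reconstructed sample, and a uniform quantizer with step size `step`; both choices and the function names are illustrative. The encoder tracks the decoder's reconstruction so that quantization errors do not accumulate. With `step=1` and integer input the scheme is lossless; with a larger step, each reconstructed sample is within `step / 2` of the original.

```python
def dpcm_encode(samples, step=1):
    """Encode each sample as the quantized difference from the decoder's previous value."""
    codes, prediction = [], 0
    for s in samples:
        code = round((s - prediction) / step)  # quantized prediction error
        codes.append(code)
        prediction += code * step              # mirror the decoder to avoid drift
    return codes


def dpcm_decode(codes, step=1):
    """Rebuild the signal by accumulating the dequantized differences."""
    values, value = [], 0
    for c in codes:
        value += c * step
        values.append(value)
    return values
```

For the samples `[100, 102, 103, 103, 101, 98]`, the lossless codes are `[100, 2, 1, 0, -2, -3]`. After the first sample, only small numbers need to be transmitted, and these can be coded with fewer bits.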

differentiation filtering: See gradient filter.

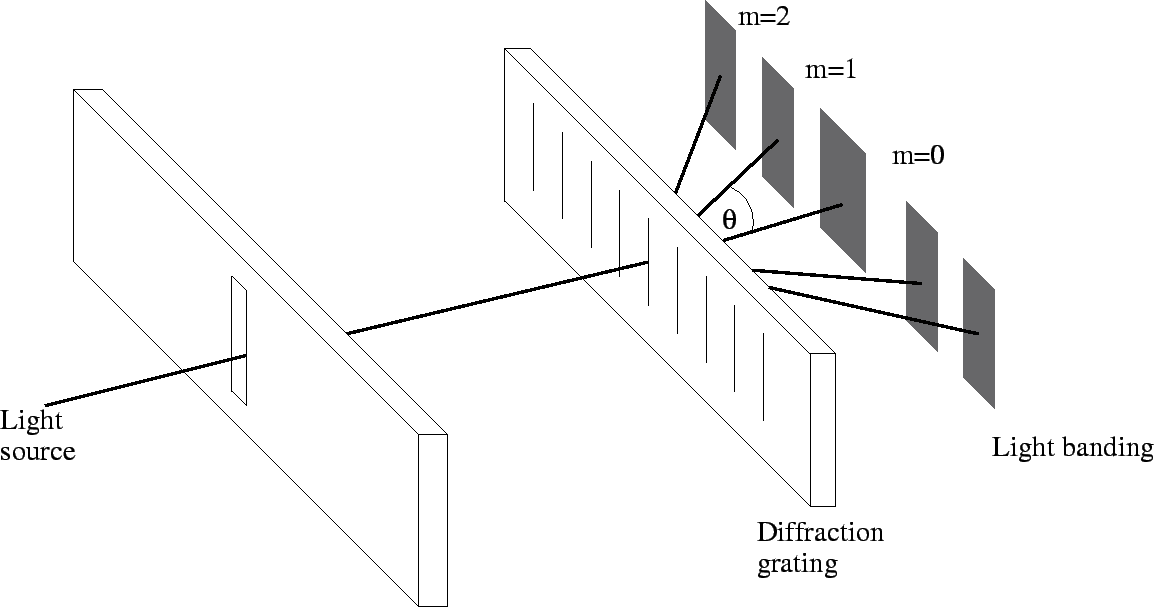

diffraction: The bending of light waves around the edge of an object or through a narrow aperture. The amount by which a ray is bent depends on its wavelength.

diffraction grating: An array of diffracting elements that has the effect of producing periodic alterations in a wave's phase, amplitude or both. The simplest arrangement is an array of slits (see moiré interferometry).

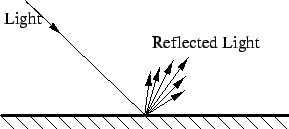

diffuse illumination: Light energy that comes from a multitude of directions, hence not causing significant shading or shadow effects. The opposite of diffuse illumination is directed illumination.

diffuse reflection: Scattering of light by a surface in many directions. Ideal Lambertian diffusion reflects light with the same radiance in every direction, regardless of the direction of the incoming light.

diffusion smoothing: A technique achieving Gaussian smoothing as the solution of a diffusion equation with the image to be filtered as the initial condition. The advantage is that, unlike repeated averaging, diffusion smoothing allows the construction of a continuous scale space.
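
For the 1D heat equation $u_t = u_{xx}$ (unit diffusivity), the solution at time $t$ equals the initial signal convolved with a Gaussian of variance $\sigma^2 = 2t$. The sketch below uses an explicit finite-difference scheme, which is one simple discretization among several. The function name, the time step and the replicated (zero-flux) boundaries are illustrative assumptions, and the scheme is stable only for `dt <= 0.5`. Each step convolves with $[dt,\ 1 - 2dt,\ dt]$, whose variance is $2\,dt$, so diffusing an impulse to time $t$ gives a profile with variance exactly $2t$.

```python
def diffuse(signal, t, dt=0.25):
    """Explicit finite-difference solution of u_t = u_xx, run up to time t."""
    if dt > 0.5:
        raise ValueError("the explicit scheme requires dt <= 0.5 for stability")
    u = [float(v) for v in signal]
    for _ in range(round(t / dt)):
        padded = [u[0]] + u + [u[-1]]  # replicate borders: no flux, mass is conserved
        u = [padded[i] + dt * (padded[i - 1] - 2 * padded[i] + padded[i + 1])
             for i in range(1, len(padded) - 1)]
    return u
```

Varying $t$ continuously yields the continuous family of smoothed signals that makes up a scale space. This is what repeated application of a fixed averaging mask cannot provide directly.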

digital camera: A camera in which the image sensing surface is made up of individual semiconductor sampling elements (typically one per pixel of the image), and quantized versions of the sensed values are recorded when an image is captured.

digital elevation map: A sampled and quantized map where every point represents a height above a reference ground plane (i.e. the elevation).

digital geometry: Geometry (points, lines, angles, surfaces, etc.) in a sampled and quantized domain.

digital image: Any sampled and quantized image.

digital image processing: Image processing restricted to the domain of digital images.

digital signal processor: A class of co-processors designed to execute processing operations on digitized signals efficiently. A common characteristic is the provision of a fast multiply and accumulate function, e.g., a <- a + b * c.

digital subtraction angiography: A basic technique used in medical image processing to detect, visualize and inspect blood vessels, based on the subtraction of a background image from the target image, usually where the blood vessels are made more visible by using an X-ray contrast medium. See also medical image registration.

digital terrain map: See digital elevation map.

digital topology: Topology (i.e. how things are connected/arranged) in a digital domain (e.g. in a digital image). See also connectivity.

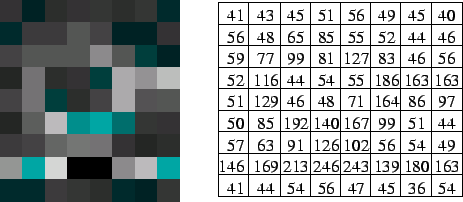

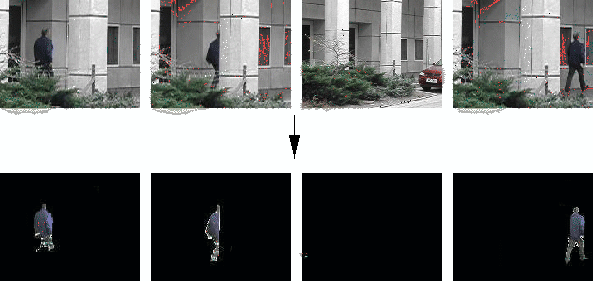

digital watermarking: The process of embedding a signature/watermark into digital data. In the domain of digital images this is most commonly done for copyright protection. The digital watermark may be invisible or visible (as shown).

digitization: The process of making a sampled digital version of some analog signal (such as an image).

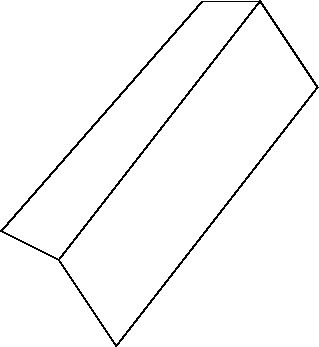

dihedral edge: The edge made by two planar surfaces. A "fold" in a surface:

dilate operator: The operation of expanding a binary or gray-scale object with respect to the background. This has the effect of filling in small holes in the object(s) and joining object regions that are close together. It is most frequently described as a morphological transformation and is the dual of the erode operator.
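
Binary dilation can be written as a Minkowski sum: each foreground pixel stamps a copy of the structuring element onto the output. The following is a minimal sketch, assuming 0/1 images stored as lists of rows and a structuring element given as a list of `(dy, dx)` offsets. The function name and the offset representation are illustrative. In the example below, a 3x3 cross fills a one-pixel hole.

```python
def dilate(image, se):
    """Binary dilation: every foreground pixel stamps the structuring element `se`,
    given as (dy, dx) offsets, onto the output."""
    h, w = len(image), len(image[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if image[y][x]:
                for dy, dx in se:
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        out[yy][xx] = 1
    return out


ring = [[0, 0, 0, 0, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 0, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 0, 0, 0, 0]]
cross = [(0, 0), (-1, 0), (1, 0), (0, -1), (0, 1)]
grown = dilate(ring, cross)  # the central hole is filled and the object grows by one pixel
```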

dimensionality: The number of dimensions that need to be considered. For example, 3D object location is often considered as a seven-dimensional problem (three dimensions for position, three for orientation and one for the object scale).

direct least square fitting: Direct fitting of a model to some data by a method that has a closed form or globally convergent solution.

directed illumination: Light energy that comes from a particular direction, hence causing relatively sharp shadows. The opposite of this form of illumination is diffuse illumination.

directional derivative: A derivative taken in a specific direction, for instance the component of the gradient along one coordinate axis. The images on the right are the vertical and horizontal directional derivatives of the image on the left.

discontinuity detection: See edge detection.

discontinuity preserving regularization: A method for preventing edges (discontinuities) from being blurred as a result of some regularization operation (such as the recovery of a dense disparity map from a sparse set of disparities computed at matching feature points).

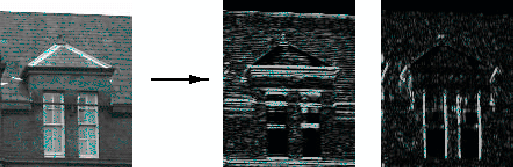

discontinuous event tracking: Tracking of events (such as a moving person) through a sequence of images. The discontinuous nature of the tracking is caused by the distance that a person (or hand, arm, etc.) can travel between frames and also by the possibility of occlusion (or self-occlusion).

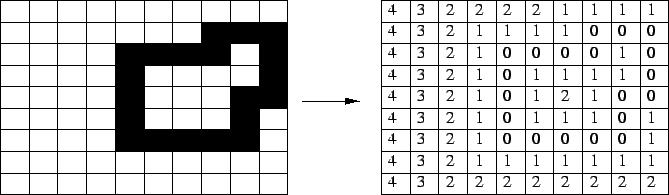

discrete cosine transform (DCT): A transformation that converts digital images into the frequency domain in terms of the coefficients of discrete cosine functions. Used, for example, within JPEG image compression.
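
The sketch below is a minimal pure-Python orthonormal DCT-II and its inverse. It also includes the separable 2D version obtained by transforming rows and then columns, which is how the transform is applied to the 8x8 blocks used in JPEG. The function names are illustrative, and this naive $O(n^2)$ form is for exposition only; real codecs use fast factorizations. A constant block produces a single nonzero (DC) coefficient, which shows the energy compaction that makes the DCT useful for compression.

```python
import math


def dct_ii(x):
    """Orthonormal 1D DCT-II."""
    n = len(x)
    return [(math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n))
            * sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
            for k in range(n)]


def idct_ii(c):
    """Inverse of dct_ii (an orthonormal DCT-III)."""
    n = len(c)
    return [sum((math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)) * c[k]
                * math.cos(math.pi * (i + 0.5) * k / n) for k in range(n))
            for i in range(n)]


def dct_2d(block):
    """Separable 2D DCT: transform every row, then every column."""
    rows = [dct_ii(r) for r in block]
    cols = [dct_ii(list(col)) for col in zip(*rows)]
    return [list(r) for r in zip(*cols)]
```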

discrete Fourier transform (DFT): A version of the Fourier transform for sampled data.

discrete relaxation: A technique for labeling objects in which the possible type of each object is iteratively constrained based on relationships with other objects in the scene. The aim is to obtain a globally consistent interpretation (if possible) from locally consistent relationships.

discrimination function: A binary function separating data into two classes. See classifier.

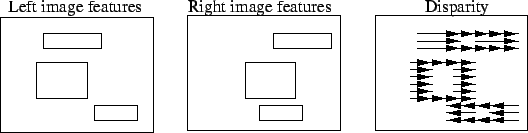

disparity: The difference in image position between corresponding points in a stereo image pair.

disparity gradient: The gradient of a disparity map for a stereo pair, which gives an estimate of the surface slope at each image point. See also binocular stereo.

disparity gradient limit: The maximum allowed disparity gradient in a potential stereo feature match.

disparity limit: The maximum allowed disparity in a potential stereo feature match. The notion of a disparity limit is supported by evidence from the human visual system.

dispersion: Scattering of light by the medium through which it is traveling.

distance function: See distance metric.

distance map: See range image.

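distance metric: A measure of how far apart two things are in terms of physical distance or similarity. A metric can be other functions besides the standard Euclidean distance, such as the algebraic or Mahalanobis distances. A true metric $d$ must satisfy, for all $x$, $y$ and $z$: 1) $d(x, y) \ge 0$; 2) $d(x, y) = 0$ if and only if $x = y$; 3) $d(x, y) = d(y, x)$; 4) $d(x, z) \le d(x, y) + d(y, z)$.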

distance transform: An image processing operation normally applied to binary images in which every object point is transformed into a value representing the distance from the point to the nearest object boundary. This operation is also referred to as chamfering (see chamfer matching).
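
A classic way to compute the transform is a two-pass raster scan. The sketch below assumes 0/1 images stored as lists of rows and measures the city-block (4-neighbour) distance from each object pixel to the nearest background pixel, which is one common convention for the distance to the boundary. The function name is illustrative. Chamfer variants use weighted diagonal steps (for example 3 and 4) to approximate Euclidean distance more closely.

```python
def distance_transform(binary):
    """City-block distance from each object pixel (1) to the nearest background pixel (0)."""
    h, w = len(binary), len(binary[0])
    far = h + w  # larger than any city-block distance inside the image
    d = [[far if binary[y][x] else 0 for x in range(w)] for y in range(h)]
    # forward raster pass: propagate distances from the top and left neighbours
    for y in range(h):
        for x in range(w):
            if y > 0:
                d[y][x] = min(d[y][x], d[y - 1][x] + 1)
            if x > 0:
                d[y][x] = min(d[y][x], d[y][x - 1] + 1)
    # backward raster pass: propagate distances from the bottom and right neighbours
    for y in range(h - 1, -1, -1):
        for x in range(w - 1, -1, -1):
            if y < h - 1:
                d[y][x] = min(d[y][x], d[y + 1][x] + 1)
            if x < w - 1:
                d[y][x] = min(d[y][x], d[y][x + 1] + 1)
    return d
```

For a 3x5 block of object pixels surrounded by background, the middle row of the result is `[0, 1, 2, 2, 2, 1, 0]`.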

distortion coefficient: A coefficient in a given image distortion model, for instance $k_1$ and $k_2$ in the distortion polynomial.

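distortion polynomial: A polynomial model of radial lens distortion. A common example is $x_d = x(1 + k_1 r^2 + k_2 r^4)$, $y_d = y(1 + k_1 r^2 + k_2 r^4)$, where $(x, y)$ are the undistorted and $(x_d, y_d)$ the distorted image coordinates, both measured from the center of distortion, and $r^2 = x^2 + y^2$.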
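
The sketch below assumes the common two-coefficient radial model $x_d = x(1 + k_1 r^2 + k_2 r^4)$ (and likewise for $y$), with coordinates normalized and measured from the center of distortion. It applies the distortion and then inverts it by fixed-point iteration, since the model has no closed-form inverse when $k_2 \ne 0$. The function names, iteration count and coefficient values are illustrative, and the iteration is assumed to converge, which holds for mild distortion.

```python
def distort(x, y, k1, k2):
    """Apply the radial polynomial x_d = x (1 + k1 r^2 + k2 r^4), and likewise for y."""
    r2 = x * x + y * y
    factor = 1 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


def undistort(xd, yd, k1, k2, iterations=50):
    """Invert the radial model by fixed-point iteration (assumes mild distortion)."""
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y
```

For example, with the hypothetical values $k_1 = -0.2$ and $k_2 = 0.05$, the point $(0.3, 0.4)$ is pulled inward by the distortion, and `undistort` recovers it to machine precision.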

distortion suppression: Correction of image distortions (such as non-linearities introduced by a lens). See geometric distortion and geometric transformation.

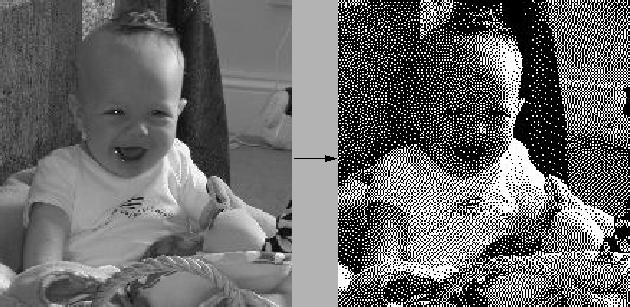

dithering: A technique simulating the appearance of different shades or colors by varying the pattern of black and white (or different color) dots. This is a common task for inkjet printers.
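
One widely used dithering method is Floyd-Steinberg error diffusion. Each pixel is thresholded, and its quantization error is pushed onto neighbours that have not yet been visited, with weights 7/16, 3/16, 5/16 and 1/16. The following is a minimal sketch for grayscale values in [0, 1] with an assumed threshold of 0.5; the function name is illustrative. Ordered dithering with a fixed threshold matrix is a common alternative.

```python
def floyd_steinberg(gray):
    """Dither a grayscale image with values in [0, 1] to a 0/1 image by error diffusion."""
    h, w = len(gray), len(gray[0])
    work = [[float(v) for v in row] for row in gray]  # accumulates the diffused error
    out = [[0] * w for _ in range(h)]
    # Floyd-Steinberg weights for the not-yet-visited neighbours (they sum to 1)
    weights = ((0, 1, 7 / 16), (1, -1, 3 / 16), (1, 0, 5 / 16), (1, 1, 1 / 16))
    for y in range(h):
        for x in range(w):
            old = work[y][x]
            new = 1 if old >= 0.5 else 0
            out[y][x] = new
            err = old - new
            for dy, dx, wgt in weights:
                yy, xx = y + dy, x + dx
                if 0 <= yy < h and 0 <= xx < w:
                    work[yy][xx] += err * wgt
    return out
```

A uniform mid-gray input comes out as a fine pattern of roughly equal numbers of black and white dots. Its average intensity stays close to 0.5, apart from the small amount of error that is pushed off the image border.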

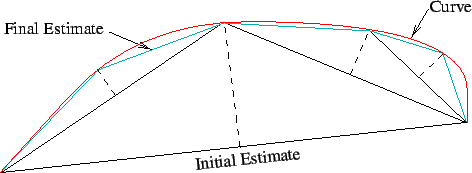

divide and conquer: A technique for solving problems efficiently by subdividing the problem into smaller subproblems, and then recursively solving these subproblems in the expectation that the smaller problems will be easier to solve. An example is an algorithm for deriving a polygonal approximation of a contour, in which a straight line estimate is recursively split in the middle (into two segments with the midpoint placed exactly on the contour) until the distance between the polygonal representation and the actual contour is below some tolerance.
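
The polygonal-approximation example can be sketched as follows. The assumptions are that the contour is an open, ordered list of sampled points, that the approximation error is measured as the perpendicular distance to the chord, and that the split happens at the middle sample as described. The function names are illustrative. Variants that split at the farthest point instead, such as Ramer-Douglas-Peucker, usually need fewer vertices.

```python
import math


def point_line_distance(p, a, b):
    """Perpendicular distance from point p to the line through a and b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length = math.hypot(dx, dy)
    if length == 0:
        return math.hypot(px - ax, py - ay)
    return abs(dx * (py - ay) - dy * (px - ax)) / length


def split_approximation(contour, tol, i=0, j=None):
    """Indices of polygon vertices approximating contour[i..j] to within tol."""
    if j is None:
        j = len(contour) - 1
    worst = max((point_line_distance(contour[k], contour[i], contour[j])
                 for k in range(i + 1, j)), default=0.0)
    if worst <= tol or j - i < 2:
        return [i, j]
    m = (i + j) // 2  # split in the middle; the new vertex lies exactly on the contour
    left = split_approximation(contour, tol, i, m)
    right = split_approximation(contour, tol, m, j)
    return left + right[1:]  # vertex m is shared, so keep it only once
```

On an L-shaped contour whose corner happens to be the middle sample, the recursion stops after one split. When the corner is off-center, midpoint splitting adds a few extra vertices around it before every piece is within tolerance.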

divisive clustering: Clustering/cluster analysis in which all items are initially considered as a single set (cluster) and subsequently divided into component subsets (clusters).

DIVX: An MPEG-4 based video compression technology aiming to achieve sufficiently high compression to enable transfer of digital video content over the Internet while maintaining high visual quality.

document analysis: A general term describing operations that attempt to derive information from documents (including for example character recognition and document mosaicing).

document mosaicing: Image mosaicing of documents.

document retrieval: Identification of a document in a database of scanned documents based on some criteria.

DoG: See difference of Gaussians.

dominant plane: A degenerate case encountered in uncalibrated structure and motion recovery where most or all of the tracked image features are coplanar in the scene.

Doppler: A physical phenomenon whereby an instrument receiving acoustic or electromagnetic waves from a source in relative motion measures a higher frequency if the source is approaching and a lower frequency if it is receding. The acoustic Doppler effect is employed in sonar sensors to estimate target velocity as well as position.

downhill simplex: A method for finding a local minimum using a simplex (a geometrical figure specified by $N + 1$ vertices) to bound the optimal position in an $N$-dimensional space.

DSP: See digital signal processor.

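dual of the image of the absolute conic (DIAC): If $\omega$ is the matrix representing the image of the absolute conic, then $\omega^{-1}$ represents its dual, the DIAC. For a camera with calibration matrix $K$, $\omega = K^{-\top} K^{-1}$, so the DIAC is $\omega^{-1} = K K^{\top}$.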

duality: The property of two concepts or theories having similar properties that can be applied to the one or to the other. For instance, several relations linking points in a projective space are formally the same as those linking lines in a projective space; such relations are dual.

dynamic appearance model: A model describing the changing appearance of an object/scene over time.

dynamic programming: An approach to numerical optimization in which an optimal solution is searched for by keeping several competing partial paths and pruning alternative paths that reach the same point with a suboptimal value.

dynamic range: The ratio of the brightest to the darkest values in an image. Most digital images have a dynamic range of around 100:1, but humans can perceive detail in dark regions even when the range is 10,000:1. To allow for this, high dynamic range images can be created.

dynamic scene: A scene in which some objects move, in contrast to the common assumption in shape from motion that the scene is rigid and only the camera is moving.

dynamic stereo: Stereo vision for a moving observer. This allows shape from motion techniques to be used in addition to the stereo techniques.

dynamic time warping: A technique for matching a sequence of observations (usually one per time sample) to a model sequence of features, where the hope is for a one-to-one match of observations to features. However, because of variations in the rate at which observations are produced, some features may get skipped or others matched to more than one observation. The usual goal is to minimize the amount of skipping or multiple matching (time warping). Efficient algorithms to solve this problem exist based on the linear ordering of the sequences. See also hidden Markov models (HMM).
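
The classic dynamic-programming formulation fills a table `D`, where `D[i][j]` is the best cost of aligning the first `i` observations with the first `j` model features. The ordering of both sequences means each cell depends only on its three predecessors. The sketch below assumes scalar sequences and an absolute-difference cost, and the function name is illustrative. It runs in $O(nm)$ time.

```python
def dtw_distance(a, b, cost=lambda p, q: abs(p - q)):
    """Minimum total matching cost between sequences a and b under time warping."""
    n, m = len(a), len(b)
    inf = float("inf")
    # D[i][j]: best cost of aligning the first i items of a with the first j items of b
    D = [[inf] * (m + 1) for _ in range(n + 1)]
    D[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = cost(a[i - 1], b[j - 1])
            # one-to-one match, or one element matched to more than one of the other
            D[i][j] = step + min(D[i - 1][j - 1], D[i - 1][j], D[i][j - 1])
    return D[n][m]
```

A time-stretched copy of a sequence, such as `[1, 1, 2, 3, 3, 4]` against `[1, 2, 3, 4]`, matches at zero cost because repeated samples are absorbed by the warping.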