Real-time Fire and Flame Detection in Video*

B. Uğur Töreyin, Yiğithan Dedeoğlu, Uğur Güdükbay, A. Enis Çetin

Bilkent University, Ankara, Turkey

Conventional point smoke and fire detectors are widely used in buildings. They typically detect particles generated by smoke and fire through ionization or photometry. An alarm is not issued unless the particles reach the sensors and activate them, so these detectors cannot be used in open spaces or large covered areas. Video-based fire detection systems can be useful for detecting fire in large auditoriums, tunnels, atriums, etc. The strength of video in fire detection is its ability to serve large and open spaces. In addition, closed-circuit television (CCTV) surveillance systems are already installed in various public places, monitoring both indoor and outdoor areas. Such systems may gain an early fire detection capability through fire detection software that processes the output of the CCTV cameras in real time.

Our aim is to detect flame, fire and smoke in real time by processing the video data generated by an ordinary camera monitoring a scene.

Detection Algorithm

The proposed video-based fire detection algorithm consists of four steps:

1. Moving region detection

Moving pixels or regions in the current frame of a video are determined using the hybrid background estimation method developed by Collins et al. (1999).
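As a rough illustration of this step, the sketch below flags moving pixels by thresholding the difference between the current frame and a recursively updated background image. It is a simplified stand-in and not the hybrid method of Collins et al.; the function name `detect_moving_pixels`, the update rate `alpha` and the threshold value are assumptions chosen for illustration only.

```python
import numpy as np

def detect_moving_pixels(frame, background, alpha=0.05, threshold=20.0):
    """Return (moving_mask, updated_background) for one grayscale frame.

    Simplified stand-in for a background estimation method; alpha and
    threshold are illustrative values, not taken from the paper.
    """
    frame = frame.astype(np.float64)
    # A pixel is marked as moving when it differs enough from the background.
    moving = np.abs(frame - background) > threshold
    # Update the background only at stationary pixels, so that moving
    # objects are not absorbed into the estimate.
    blended = (1.0 - alpha) * background + alpha * frame
    updated = np.where(moving, background, blended)
    return moving, updated

background = np.full((4, 4), 50.0)
frame = background.copy()
frame[1, 2] = 200.0  # a bright object appears at one pixel
mask, background = detect_moving_pixels(frame, background)
```

In this toy input only the pixel at [1, 2] is marked as moving, and the background estimate at that location is left unchanged.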

2. Detection of fire-colored pixels

Color values of moving pixels are compared with a pre-determined color distribution, which represents possible fire colors in video in the RGB color space.
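The text above does not specify the form of the color distribution. One plausible representation, assumed here purely for illustration, is a small set of spherical Gaussians in RGB space, with a pixel accepted when it lies within a few standard deviations of any center. The centers, standard deviations and the name `is_fire_colored` below are hypothetical placeholders, not values from this work.

```python
import numpy as np

# Hypothetical placeholder model: (mean RGB, standard deviation) pairs.
# A real model would be estimated from sample images of fire.
FIRE_COLOR_MODEL = [
    (np.array([230.0, 140.0, 40.0]), 30.0),
    (np.array([250.0, 220.0, 120.0]), 25.0),
]

def is_fire_colored(pixels, model=FIRE_COLOR_MODEL, n_std=2.0):
    """Return a boolean mask for an array of RGB values with shape (..., 3)."""
    pixels = np.asarray(pixels, dtype=np.float64)
    mask = np.zeros(pixels.shape[:-1], dtype=bool)
    for mean, std in model:
        # Euclidean distance of every pixel to this Gaussian's center.
        distance = np.linalg.norm(pixels - mean, axis=-1)
        mask |= distance <= n_std * std
    return mask

colors = np.array([[235, 150, 50], [30, 60, 200]])  # orange-like, blue-like
fire_mask = is_fire_colored(colors)
```

With these placeholder values the orange-like color is accepted and the blue-like color is rejected. In the full pipeline the test would only be applied to pixels already marked as moving.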

3. Temporal wavelet analysis

Turbulent flames are observed to flicker with a characteristic frequency of around 10 Hz, independent of the burning material and the burner (Albers and Agrawal, 1999; Chamberlin and Rose, 1965). To capture 10 Hz flicker without aliasing, the video should be captured at a rate of at least 20 fps. If the video is available at a lower capture rate, aliasing occurs, but flicker due to flames can still be observed in the video.
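The effect of a low capture rate can be made concrete with the standard frequency-folding relation for sampled sinusoids. The short sketch below (the helper name `apparent_frequency` is ours) computes the frequency at which an idealized 10 Hz flicker would appear for a given frame rate; real flame flicker is not a pure sinusoid, so this is only an idealization.

```python
def apparent_frequency(signal_hz, frame_rate):
    """Frequency at which a sinusoid of signal_hz appears when sampled at frame_rate."""
    # Sampling folds the signal frequency around the nearest multiple
    # of the frame rate.
    nearest_multiple = round(signal_hz / frame_rate) * frame_rate
    return abs(signal_hz - nearest_multiple)

for fps in (25, 15, 12):
    print(fps, apparent_frequency(10.0, fps))
```

At 25 fps the flicker is observed at its true 10 Hz, whereas at 15 fps it folds down to 5 Hz and at 12 fps to 2 Hz. The flicker is still present in the aliased signal, only at a lower apparent frequency.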

Each pixel’s red channel value, $x_n[k,l]$, is fed to a two-stage filter bank (Fig.1). This filter bank is composed of half-band high-pass and low-pass filters with filter coefficients {-0.25, 0.5, -0.25} and {0.25, 0.5, 0.25}, respectively. The filter bank produces the wavelet subsignals $d_n[k,l]$ and $e_n[k,l]$. If there is high-frequency activity at pixel location [k,l], the high-band subsignals $d_n$ and $e_n$ take non-zero values (Fig.2). At a stationary pixel, however, both subsignals should be zero or very close to zero because of the high-pass filters used in the subband analysis (Fig.3).
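The filter bank can be sketched for the temporal signal of a single pixel as follows. The filter coefficients are those given above; the wiring (d taken from the high band of the input, e from the high band of the low band, with downsampling by two after each filter) and the boundary handling are assumptions made for this illustration.

```python
import numpy as np

HPF = np.array([-0.25, 0.5, -0.25])  # half-band high-pass filter
LPF = np.array([0.25, 0.5, 0.25])    # half-band low-pass filter

def filter_and_downsample(signal, taps):
    """Filter with a 3-tap kernel, then keep every second sample."""
    # mode="valid" drops the boundary samples (an assumed boundary rule).
    return np.convolve(signal, taps, mode="valid")[::2]

def two_stage_filter_bank(x):
    """Return the subsignals (d, e) for the temporal signal x of one pixel.

    Assumed wiring: d is the high band of x, and e is the high band
    of the low band of x.
    """
    x = np.asarray(x, dtype=np.float64)
    d = filter_and_downsample(x, HPF)
    low = filter_and_downsample(x, LPF)
    e = filter_and_downsample(low, HPF)
    return d, e

# A stationary pixel and a pixel that switches irregularly between a
# bright (flame) value and a dark (background) value.
stationary = np.full(32, 120.0)
flickering = np.tile([200.0, 200.0, 50.0, 200.0, 50.0, 50.0, 50.0, 200.0], 4)

d_s, e_s = two_stage_filter_bank(stationary)
d_f, e_f = two_stage_filter_bank(flickering)
```

For the stationary pixel both subsignals are zero, because the high-pass coefficients sum to zero. For the flickering pixel both contain non-zero values, which is the behavior the temporal analysis relies on.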

Fig.1. A two-stage filter bank. HPF and LPF represent half-band high-pass and low-pass filters, with filter coefficients {-0.25, 0.5, -0.25} and {0.25, 0.5, 0.25}, respectively. This filter bank is used for wavelet analysis.

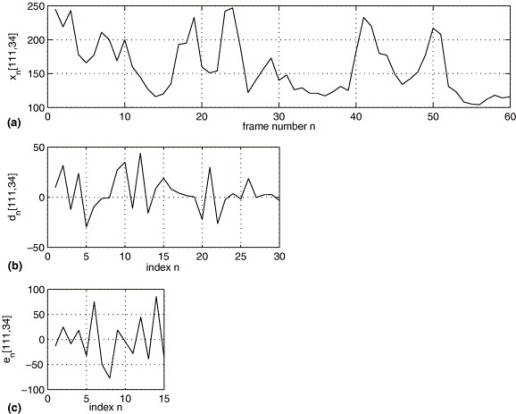

Fig.2. (a) Temporal variation of the pixel value $x_n[111, 34]$. The pixel at [111, 34] is part of a flame for image frames $x_n$, n = 1, 2, 3, 19, 23, 24, 41 and 50. It becomes part of the background for n = 12,..., 17, 20, 21, 26, 27, 31,..., 39, 45, and 52,..., 60. Wavelet domain subsignals (b) $d_n$ and (c) $e_n$ reveal the fluctuations of the pixel at [111, 34].

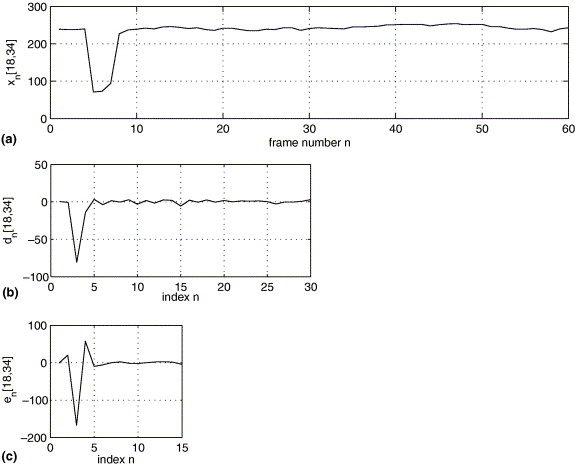

Fig.3. (a) Temporal history of the pixel at [18, 34]. It is part of a fire-colored object for n = 4, 5, 6, 7, and 8, and it becomes part of the background afterwards. The corresponding subsignals (b) $d_n$ and (c) $e_n$ exhibit stationary behavior for n > 8.

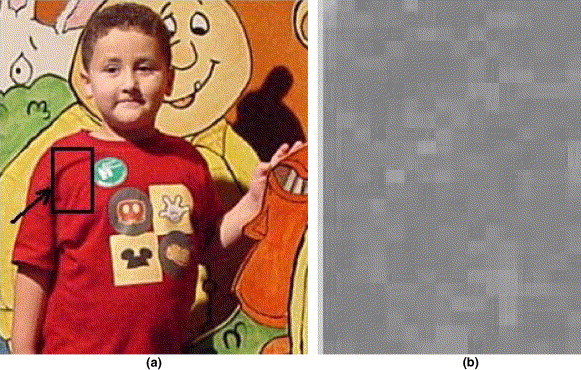

4. Spatial wavelet analysis

In an ordinary fire-colored object there is little spatial variation in the moving region, as shown in Fig.4(a). In a fire region, on the other hand, there is significant spatial variation, as shown in Fig.5(a). The spatial wavelet analysis is performed on a rectangular frame containing the pixels of the fire-colored moving regions. The images in Figs.4(b) and 5(b) are obtained after a single-stage two-dimensional wavelet transform that is implemented in a separable manner using the same filters as in the temporal wavelet analysis. The absolute values of the low-high, high-low and high-high wavelet subimages are added to obtain these images. A decision parameter is defined for this step according to the energy of the wavelet subimages.
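A minimal sketch of this step is given below. It applies the same 3-tap filters along rows and then columns, downsampling by two after each filter, and sums the absolute values of the three detail subimages. The exact decision parameter is not specified above, so the mean of the summed absolute coefficients (`spatial_energy`) is a hypothetical choice; the boundary handling and function names are likewise assumptions.

```python
import numpy as np

HPF = np.array([-0.25, 0.5, -0.25])  # half-band high-pass filter
LPF = np.array([0.25, 0.5, 0.25])    # half-band low-pass filter

def filter_axis(image, taps, axis):
    """Filter along one axis with a 3-tap kernel and keep every second sample."""
    filtered = np.apply_along_axis(
        lambda v: np.convolve(v, taps, mode="valid"), axis, image
    )
    return filtered[::2, :] if axis == 0 else filtered[:, ::2]

def detail_map(region):
    """Return |x_lh| + |x_hl| + |x_hh| for a 2-D grayscale region."""
    region = np.asarray(region, dtype=np.float64)
    low_rows = filter_axis(region, LPF, axis=1)
    high_rows = filter_axis(region, HPF, axis=1)
    # The lh/hl naming convention varies; the sum does not depend on it.
    x_lh = filter_axis(low_rows, HPF, axis=0)
    x_hl = filter_axis(high_rows, LPF, axis=0)
    x_hh = filter_axis(high_rows, HPF, axis=0)
    return np.abs(x_lh) + np.abs(x_hl) + np.abs(x_hh)

def spatial_energy(region):
    """Hypothetical decision parameter: mean of the summed absolute coefficients."""
    return detail_map(region).mean()

flat = np.full((16, 16), 180.0)               # uniformly colored object
rng = np.random.default_rng(0)
textured = rng.uniform(100.0, 255.0, (16, 16))  # stand-in for a flame region

flat_energy = spatial_energy(flat)
textured_energy = spatial_energy(textured)
```

The uniformly colored region yields zero energy, while the textured region yields a clearly positive value. Comparing this quantity against a threshold is one way a decision for this step could be made.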

Fig.4. (a) A child with a fire-colored t-shirt, and (b) the absolute sum of spatial wavelet transform coefficients, $|x_{lh}[k,l]| + |x_{hl}[k,l]| + |x_{hh}[k,l]|$, of the region bounded by the indicated rectangle.

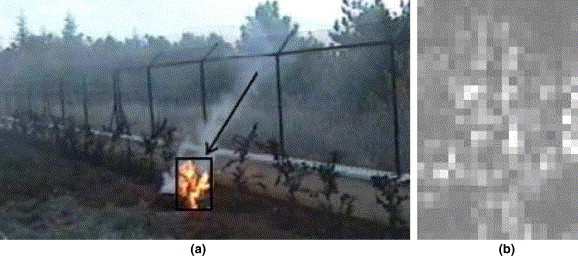

Fig.5. (a) Fire, and (b) the absolute sum of spatial wavelet transform coefficients, $|x_{lh}[k,l]| + |x_{hl}[k,l]| + |x_{hh}[k,l]|$, of the region bounded by the indicated rectangle.

References

- R. T. Collins, A. J. Lipton, and T. Kanade, "A System for Video Surveillance and Monitoring," in Proc. of American Nuclear Society (ANS) Eighth International Topical Meeting on Robotics and Remote Systems, Pittsburgh, PA, 1999.

- B. W. Albers and A. K. Agrawal, "Schlieren Analysis of an Oscillating Gas-jet Diffusion Flame," Combustion and Flame, Vol. 119, pp. 84-94, 1999.

- D. S. Chamberlin and A. Rose, The First Symposium International on Combustion, The Combustion Institute, Pittsburgh, pp. 27-32, 1965.