The pig behavior dataset consisting of 23 days (over 6 weeks) of daytime pig video captured from a nearly overhead camera. Registered color and depth videos are captured. The data was captured at 6 frames per second, and stored in batches of 1800 frames (5 minutes). Most frames show 8 pigs.

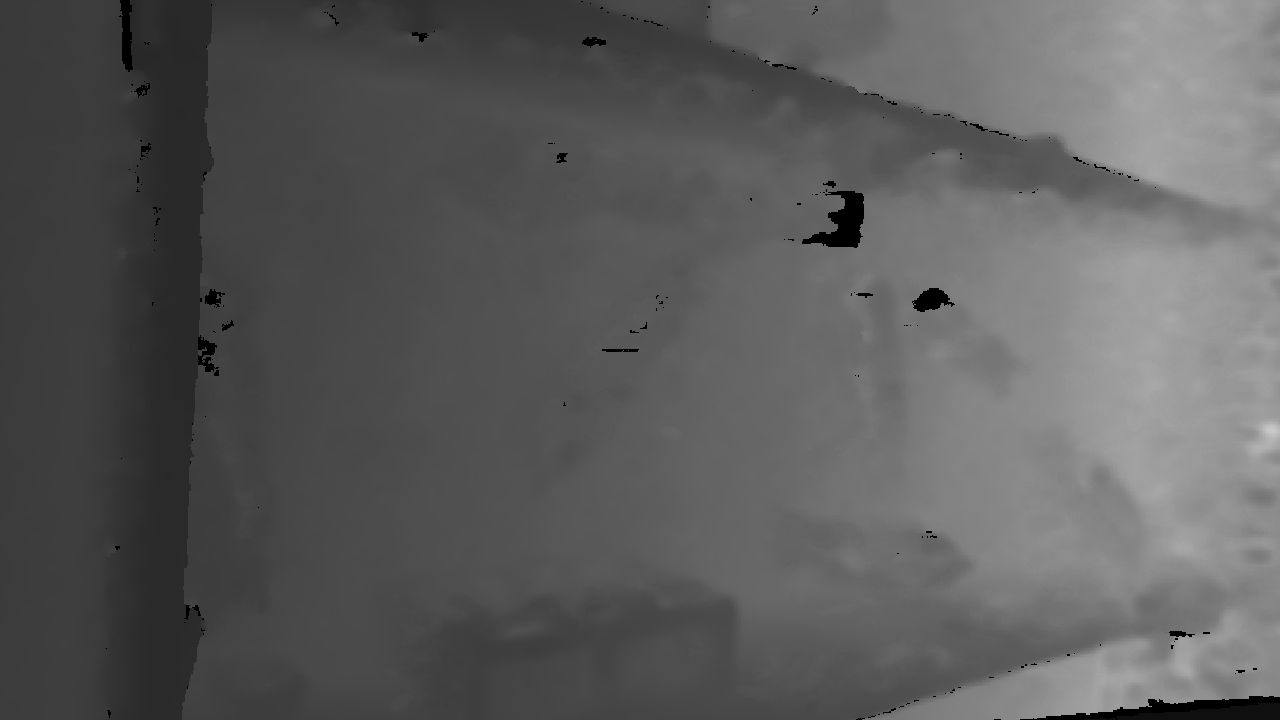

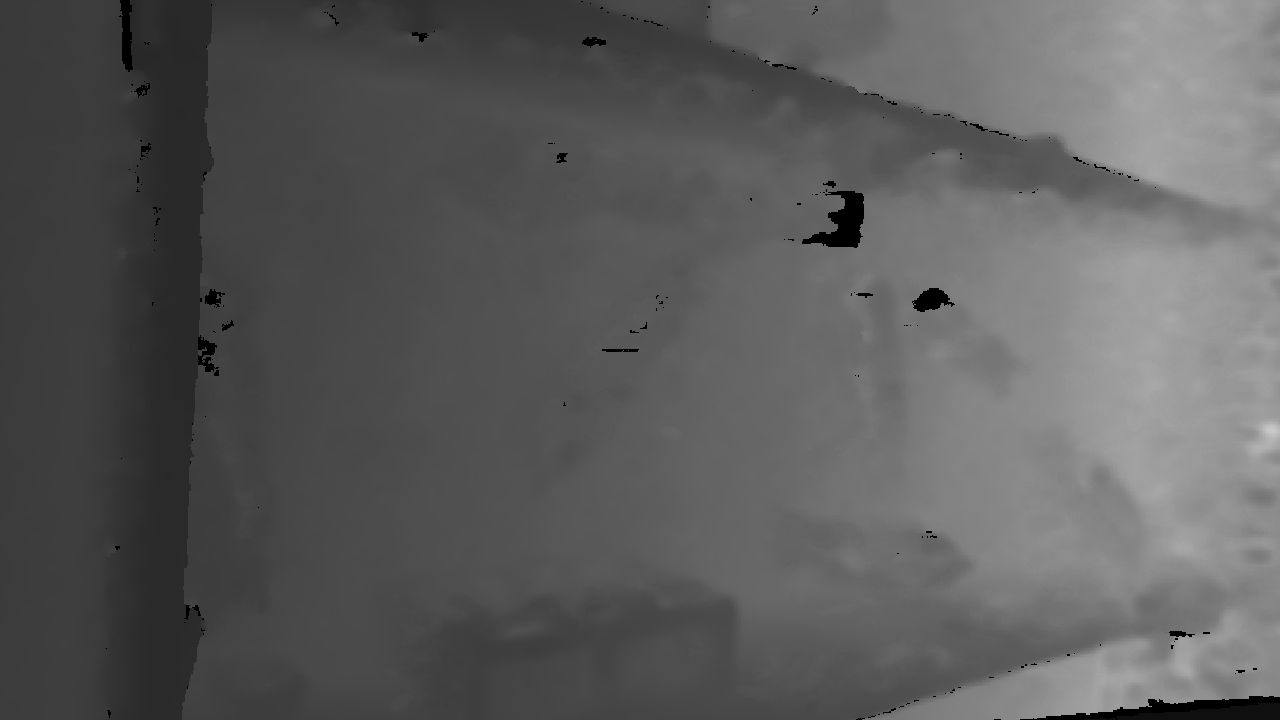

An example of the color and depth images can be seen here:

|

|

The feeder is visible at the bottom center, and the two water sources are at the bottom left (visible) and bottom right (not visible behind the pig in this image) of the pen. Altogether, there are approximately 3,429,000 data frames captured.

The dataset was collected between 5 Nov and 11 Dec (2019, 6 weeks) in a single pigpen (5.8m x 1.9m) with 8 growing pigs at the SRUC (Scotland's Rural College) research pig unit (near Edinburgh, UK). The pigs were mixed intact males and females weighing around 30kg at the start of the study. They were given a 3-space feeder with ad libitum commercial pig feed, two nipple water drinkers and a plastic enrichment device (Porcichew, East Riding Farm Services Ltd, Yorkshire, UK) suspended at pig height from a chain (see yellow object at top center). Pigs were also given straw and shredded paper on a part slatted floor. Color image and depth data was collected using an Intel RealSense D435i camera positioned at 2.5 meters from the ground. Both RGB and depth information were acquired at 6fps with a resolution of 1280×720, and the acquisition was limited to daytime (from 7AM to 7PM), due to the absence of artificial light during nighttime.

Twelve of the sequences have been manually ground-truthed, with axis-aligned bounding boxes, a persistent tracking identifier, and a behavior label. The labels are applied to every third image, so there are 600 labeled frames in each sequence, for a total of 7200 labeled frames, each with 8 labeled pigs.

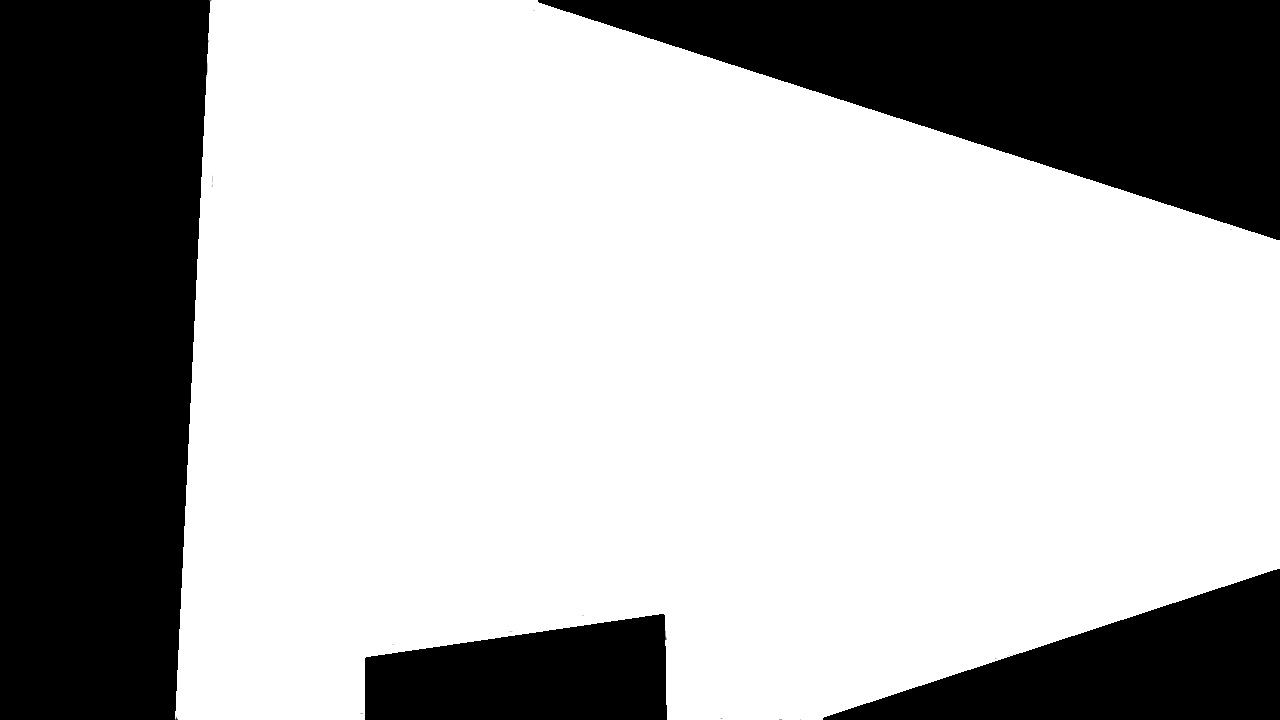

An example of the ground-truth bounding boxes can be seen here, along with the mask.jpg mask file that indicates the usable pen area:

|

|

The ground truth files are in annotated.tar (3.2 Gb), which consists of one folder for each sequence. All sequences are associated with a folder called a 'CLIP'. The clips that are ground-truthed are:

| DATE | CLIPS |

|---|---|

| 5/11/2019 | 000002, 000009 |

| 11/11/2019 | 000016,000028,000036 |

| 15/11/2019 | 000033 |

| 22/11/2019 | 000010 |

| 28/11/2019 | 000113 |

| 2/12/2019 | 000005,000208 |

| 10/12/2019 | 000060, 000078 |

Each CLIP folder contains:

There are 25 files, for 23 collection days. 23 of the files are zip files, the other 2 are tar files. They can be downloaded from:

| DATE | FILE | SIZE (Gb) | CLIPS | CLIP NUMS |

|---|---|---|---|---|

| 5/11/19 | pigs051119.zip | 13.3 | 48 | 0-64 |

| 6/11/19 | pigs061119.zip | 25.8 | 107 | 71-177 |

| 7/11/19 | pigs071119.zip | 23.1 | 108 | 184-291 |

| 8/11/19 | pigs081119.zip | 24.5 | 108 | 297-404 |

| 9/11/19 | pigs091119.zip | 8.5 | 48 | 410-457 |

| 11/11/19 | 2019-11-11.tar | 9.0 | 38 | 0-37 |

| 15/11/19 | pigs151119.zip | 16.5 | 66 | 0-66 |

| 16/11/19 | pigs161119.zip | 2.6 | 10 | 67-76 |

| 22/11/19 | 2019-11-22.tar | 5.8 | 25 | 0-24 |

| 27/11/19 | pigs271119.zip | 21.4 | 85 | 0-84 |

| 28/11/19 | pigs281119.zip | 28.4 | 114 | 85-199 |

| 29/11/19 | pigs291119.zip | 30.9 | 115 | 200-314 |

| 30/11/19 | pigs301119.zip | 28.5 | 115 | 315-429 |

| 1/12/19 | pigs011219.zip | 29.1 | 115 | 430-544 |

| 2a/12/19 | pigs021219a.zip | 9.9 | 41 | 545-585 |

| 2b/12/19 | pigs021219b.zip | 16.4 | 63 | 0-63 |

| 3/12/19 | pigs031219.zip | 29.1 | 115 | 64-178 |

| 4/12/19 | pigs041219.zip | 28.7 | 114 | 179-293 |

| 5/12/19 | pigs051219.zip | 9.4 | 48 | 294-341 |

| 7/12/19 | pigs071219.zip | 7.3 | 29 | 0-28 |

| 8/12/19 | pigs081219.zip | 27.7 | 116 | 29-144 |

| 9/12/19 | pigs091219.zip | 28.0 | 115 | 145-259 |

| 10a/12/19 | pigs101219a.zip | 9.4 | 38 | 260-297 |

| 10b/12/19 | pigs101219b.zip | 16.8 | 71 | 0-71 |

| 11/12/19 | pigs111219.zip | 9.0 | 39 | 72-110 |

When uncompressed (tar or zip) there is one folder for each video clip. Each day file contain from 10 to 116 video clips in separate subfolders, numbered 000000, 000001, and so on. There may be a few missing subfolders in a sequence. Each subfolder contains 1 video clip of 5 minutes length, with 1800 video frames, along with the other files described below:

Bounding boxes and behaviors have been automatically computed for all pigs in all frames. This data can be downloaded from the file results_dataset.tar.gz (4.1 Gb). These results were computed based on the algorithms described in the paper: L. Bergamini, S. Pini, A. Simoni, R. Vezzani, S. Calderara, R. B. D'Eath, R. B. Fisher; Extracting Accurate Long-Term Behavior Changes from a Large Pig Dataset, Proc. Int. Conf. on Computer Vision Theory and Applications (VISAPP 2021), online 8-10 February 2021. where the results computed from this dataset were described. No guarantee is given about the correctness of the results.

When unpacked, this file consists of a number of date and clip folders and subfolders. The results for the days listed above can be found below. The clip numbers here match the clip numbers in the raw data.

| DATE | FOLDER | SUB-FOLDERS |

|---|---|---|

| 5/11/19 | 2019-11-05--12:27:11 | 0-64 |

| 6/11/19 | 2019-11-05--12:27:11 | 71-177 |

| 7/11/19 | 2019-11-05--12:27:11 | 184-291 |

| 8/11/19 | 2019-11-05--12:27:11 | 297-404 |

| 9/11/19 | 2019-11-05--12:27:11 | 410-457 |

| 11/11/19 | 2019-11-11--11:34:50 | 0-37 |

| 15/11/19 | 2019-11-15--12:26:27 | 0-66 |

| 16/11/19 | 2019-11-15--12:26:27 | 67-76 |

| 22/11/19 | 2019-11-22--11:20:15 | 0-24 |

| 27/11/19 | 2019-11-27--10:24:59 | 0-84 |

| 28/11/19 | 2019-11-27--10:24:59 | 85-199 |

| 29/11/19 | 2019-11-27--10:24:59 | 200-314 |

| 30/11/19 | 2019-11-27--10:24:59 | 315-429 |

| 1/12/19 | 2019-11-27--10:24:59 | 430-544 |

| 2a/12/19 | 2019-11-27--10:24:59 | 545-585 |

| 2b/12/19 | 2019-12-02--12:45:38 | 0-63 |

| 3/12/19 | 2019-12-02--12:45:38 | 64-178 |

| 4/12/19 | 2019-12-02--12:45:38 | 179-293 |

| 5/12/19 | 2019-12-02--12:45:38 | 294-341 |

| 7/12/19 | 2019-12-07--16:44:18 | 0-28 |

| 8/12/19 | 2019-12-07--16:44:18 | 29-144 |

| 9/12/19 | 2019-12-07--16:44:18 | 145-259 |

| 10a/12/19 | 2019-12-07--16:44:18 | 260-297 |

| 10b/12/19 | 2019-12-10--11:52:53 | 0-71 |

| 11/12/19 | 2019-12-10--11:52:53 | 72-110 |

Each clip folder contains 4 python files:

The description of the contents of each file is described below in section Format of automatically detected pigs files.

The main work was done by the authors of this paper:

L. Bergamini, S. Pini, A. Simoni, R. Vezzani, S. Calderara, R. B. D'Eath, R. B. Fisher; Extracting Accurate Long-Term Behavior Changes from a Large Pig Dataset, Proc. Int. Conf. on Computer Vision Theory and Applications (VISAPP 2021), online 8-10 February 2021.which should be cited if the pig data is used in published research. Our thanks go to SRUC technician Mhairi Jack, and farm staff Peter Finnie and Phil O’Neill. SRUC’s contribution to this work was funded by the Rural and Environment Science and Analytical Services Division of the Scottish Government. Ethical approval was obtained for the pig experiments.

Email: Prof. Robert Fisher at rbf -a-t- inf.ed.ac.uk.

School of Informatics, Univ. of Edinburgh

The ground-truth and detection JSON file format consists of a header, and a descriptor for each detected and tracked pig in each frames. NOTE 1: the ground truth only labels up to 600 frames (every third frame) so the frame counter only goes to 600 and ground truth frame f corresponds to raw video frame 3*f. NOTE 2: a new descriptor is added only when the description changes, so some ground-truth frames might not have a descriptor in every frame for some pig, e.g. if it is sleeping without moving. Bounding boxes are interpolated. Behaviors are forward propagated (e.g. frames 2,3,4 will have the same behavior as 1).

The header and wrapper structure consists of:

{"videoFileName":"color.mp4","fullVideoFilePath":"2019-11-27--10:24:59/000113/","stepSize":0.1,"config":

{"stepSize":0.1,"playbackRate":0.4,"imageMimeType":"image/jpeg","imageExtension":".jpg","framesZipFilename":"extracted-frames.zip","consoleLog":false},

"objects":[

FRAMELIST

...

FRAMELIST

]}

There is one FRAMELIST for each tracked pig. In the case of the ground truth, this means 8 pigs. In the case of real data, there may be more or less than 8 depending on the number of pigs in the pen (usually 8 but may be fewer) and the success in tracking (which might break and start a new track). Below, "PIGID" is the persistent identifier of the pig in the FRAMEDATA, in the range 0, 1, ...

{"frames":[

FRAMEDATA

...

FRAMEDATA

],"id":"PIGID"}

There is one entry for each detected pig:

{"frameNumber":FNUM,"bbox":{"x":XXX,"y":YYY,"width":WWW,"height":HHH},"isGroundTruth":true,"visible":true,"behaviour":"BBB"},

This section describes the format of the automatically detected pigs files associated with each video clip. Each clip folder contains 4 python files:

As the same detection and tracking information is in both tracklets.npy and behaviour_F.npy, you only need to use one of the three behaviour_F.npy files and can ignore tracklets.npy.

You can load the file and inspect its content with the following code. You need Python 3.

import numpy as np

track = np.load('FILE-PATH/tracklets.npy', allow_pickle=True) # 4 element array

track[0] --> frame rate (FPS) while generating tracklets of a single video

track[1] --> mean tracklet length

track[2] --> video output resolution (should be always 1280x720 pixels)

track[3] --> N-dimensional (N = number of tracklets) list. Each list entry contains an M-dimensional (M = tracklet length) array representing a single tracklet.

Each entry in the tracklet array has 6 values [frameID, bbox_X_min, bbox_Y_min, bbox_X_max, bbox_Y_max, tracklet_state],

"tracklet_state" can have 3 values: 0 = new tracklet, 1 = updated tracklet, 2 = wrong tracklet or no available detection

You can load the file and inspect its content with the following code. You need Python 3. F = 15,20,25 is the number of frames taken into account in the temporal analysis. The minimum movement threshold is fixed to 25mm per frame for the moving behaviour and 110mm for the running behaviour.

import numpy as np

track = np.load('FILE-PATH/behaviour_F.npy', allow_pickle=True) # N-dimensional array (N = number of tracklets)

track[i] --> array with Mx14 dimension (M = tracklet length) where the 14 values are in order (for track i and frame m of the track):

track[i][m][0] --> frameID

track[i][m][1] --> bbox_X_min

track[i][m][2] --> bbox_Y_min

track[i][m][3] --> bbox_X_max

track[i][m][4] --> bbox_Y_max

track[i][m][5] --> tracklet_state: 0 = new tracklet, 1 = updated tracklet, 2 = wrong tracklet or no available detection

track[i][m][6] --> temporal_analysis, i.e. mean absolute difference in terms of bounding box center pixel position with regard to the previous F (15, 20 or 25) frames

track[i][m][7] --> theta, i.e. angle of orientation of the pig

track[i][m][8] --> center_of_mass_X

track[i][m][9] --> center_of_mass_Y

track[i][m][10] --> length of the pig computed with moments

track[i][m][11] --> width of the pig computed with moments

track[i][m][12] --> zone in the pen where the center of mass of the pig is seen (0 = other, 1 = feeder, 2 = water bottom left, 3 = water bottom right, 4 = playing with yellow toy)

track[i][m][13] --> behavior code (0 = unknown, 1 = not moving, 2 = moving, 3 = running, 4 = eating, 5 = drinking, 6 = playing, 101 = standing, 102 = lying)