
Camera Calibration Problem

The result of camera calibration is an explicit transformation that maps a 3D world point $M=(X,Y,Z,1)^T$ to a 2D pixel $m=(u,v,1)^T$. This mapping can be represented by a $3 \times 4$ projection matrix, $P$, so that $s\,m = P M$ for some nonzero scale $s$. $P$ encompasses 11 physical parameters: the rotation angles $R_x$, $R_y$ and $R_z$; the translations $t_x$, $t_y$ and $t_z$; the coordinates of the principal point $(u_0,v_0)$; two scale factors, one along each image axis; and the skewness $c$ between the image axes. This camera model therefore ignores lens distortion, which is often accounted for by adding distortion parameters to the camera model [12].
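The following is a minimal sketch of how the 11 parameters could be composed into $P$. It is not the author's code. It assumes a common pinhole parameterization in which $P = K\,[R \mid t]$ with $R = R_z R_y R_x$ (angles in radians) and $K = \begin{pmatrix}\alpha_u & c & u_0\\ 0 & \alpha_v & v_0\\ 0 & 0 & 1\end{pmatrix}$. The rotation order, the layout of $K$, and the names `alpha_u`, `alpha_v`, `rotation`, `projection_matrix` and `project` are illustrative assumptions.

```python
import math

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def rotation(rx, ry, rz):
    """Rotation matrix R = Rz @ Ry @ Rx (assumed convention), angles in radians."""
    cx, sx = math.cos(rx), math.sin(rx)
    cy, sy = math.cos(ry), math.sin(ry)
    cz, sz = math.cos(rz), math.sin(rz)
    Rx = [[1.0, 0.0, 0.0], [0.0, cx, -sx], [0.0, sx, cx]]
    Ry = [[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]]
    Rz = [[cz, -sz, 0.0], [sz, cz, 0.0], [0.0, 0.0, 1.0]]
    return matmul(Rz, matmul(Ry, Rx))

def projection_matrix(rx, ry, rz, tx, ty, tz, u0, v0, alpha_u, alpha_v, c):
    """Compose the 3x4 matrix P = K [R | t] from the 11 parameters."""
    K = [[alpha_u, c, u0],
         [0.0, alpha_v, v0],
         [0.0, 0.0, 1.0]]                   # intrinsic part (assumed layout)
    R = rotation(rx, ry, rz)                # extrinsic rotation
    t = (tx, ty, tz)                        # extrinsic translation
    Rt = [R[i] + [t[i]] for i in range(3)]  # 3x4 matrix [R | t]
    return matmul(K, Rt)

def project(P, M):
    """Map a homogeneous world point M = (X, Y, Z, 1) to pixel coordinates (u, v)."""
    x = [sum(P[i][j] * M[j] for j in range(4)) for i in range(3)]
    return x[0] / x[2], x[1] / x[2]         # divide out the projective scale s

# Camera 10 units from the world origin along its optical axis, no rotation or skew.
P = projection_matrix(0.0, 0.0, 0.0, 0.0, 0.0, 10.0, 320.0, 240.0, 800.0, 800.0, 0.0)
print(project(P, [1.0, 0.5, 0.0, 1.0]))     # (400.0, 280.0)
```

$P$ has 12 entries, but multiplying it by any nonzero constant leaves every projected pixel unchanged. This is consistent with the count of 11 independent parameters.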

However, these distortion parameters can instead be estimated from the captured images in a separate pre-calibration process [9],[6]. The images (or image features) are then undistorted before calibration proceeds. Decoupling the distortion parameters from the others preserves the simple relations of the distortion-free model, which makes subsequent vision tasks (e.g., stereo reconstruction) easier. Moreover, the decoupling reduces the effect that the correlation between the lens distortion coefficients and the other camera model parameters [8] has on parameter estimation.
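This section does not specify the distortion model of [12] or the pre-calibration methods of [9],[6]. Purely as an illustration of the "undistort first, then calibrate" workflow, the sketch below assumes a two-coefficient radial polynomial about a known distortion center. It also assumes that the pre-calibration step has already supplied `center`, `k1` and `k2`. The model, the coefficient values, and the functions `distort` and `undistort` are all hypothetical.

```python
def distort(p, center, k1, k2):
    """Assumed radial model: p_d = center + (p_u - center) * (1 + k1*r^2 + k2*r^4)."""
    x, y = p[0] - center[0], p[1] - center[1]
    r2 = x * x + y * y                   # squared radius of the undistorted point
    s = 1.0 + k1 * r2 + k2 * r2 * r2     # radial scaling factor
    return center[0] + x * s, center[1] + y * s

def undistort(p, center, k1, k2, iterations=20):
    """Invert distort() by fixed-point iteration; adequate for mild distortion."""
    xd, yd = p[0] - center[0], p[1] - center[1]
    xu, yu = xd, yd                      # initial guess: no distortion
    for _ in range(iterations):
        r2 = xu * xu + yu * yu
        s = 1.0 + k1 * r2 + k2 * r2 * r2
        xu, yu = xd / s, yd / s          # solve p_d = p_u * s(p_u) for p_u
    return center[0] + xu, center[1] + yu

# Undistort every detected feature once, then calibrate with the distortion-free model.
features = [(500.0, 400.0), (100.0, 50.0)]
center, k1, k2 = (320.0, 240.0), 1e-7, 0.0   # hypothetical pre-calibration output
corrected = [undistort(f, center, k1, k2) for f in features]
```

The corrected features can then be passed to any calibration procedure that uses the distortion-free 11-parameter model. Strong distortion may require a more robust inversion than plain fixed-point iteration.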


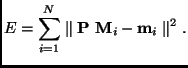

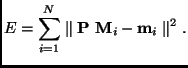

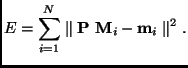

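Given a sufficient number, $N$, of reference world points, $M_i$, together with their corresponding pixel positions, $m_i$ ($i = 1, \dots, N$), the camera calibration problem is to estimate the 11 camera parameters, or equivalently the projection matrix $P$, that minimize

$$\sum_{i=1}^{N} \left\| m_i - \hat{m}_i \right\|^2 \tag{1}$$

where $\hat{m}_i$ is the projection of $M_i$ by $P$, normalized so that its third coordinate is 1.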
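To make objective (1) concrete, the sketch below evaluates the sum of squared distances between each observed pixel $m_i$ and the projection of $M_i$ by a given $P$. It only evaluates the objective. It does not show how the minimization is carried out, and the function names `project` and `reprojection_error` and the sample data are illustrative assumptions.

```python
def project(P, M):
    """Project homogeneous world point M with the 3x4 matrix P; return pixel (u, v)."""
    x = [sum(P[i][j] * M[j] for j in range(4)) for i in range(3)]
    return x[0] / x[2], x[1] / x[2]      # normalize the third coordinate to 1

def reprojection_error(P, world_points, pixels):
    """Sum over i of the squared distance between m_i and the projection of M_i."""
    if len(world_points) != len(pixels):
        raise ValueError("every world point M_i needs a matching pixel m_i")
    total = 0.0
    for M, m in zip(world_points, pixels):
        u_hat, v_hat = project(P, M)
        total += (m[0] - u_hat) ** 2 + (m[1] - v_hat) ** 2
    return total

P = [[800.0, 0.0, 320.0, 3200.0],
     [0.0, 800.0, 240.0, 2400.0],
     [0.0, 0.0, 1.0, 10.0]]
Ms = [[1.0, 0.5, 0.0, 1.0], [0.0, 0.0, 0.0, 1.0]]   # M_i = (X, Y, Z, 1)
ms = [[401.0, 280.0, 1.0], [320.0, 242.0, 1.0]]     # m_i = (u, v, 1)
print(reprojection_error(P, Ms, ms))                # 5.0
```

Each correspondence contributes two scalar equations, one in $u$ and one in $v$. With 11 unknowns, $2N \ge 11$, so at least $N = 6$ reference points are needed. The points should also not all lie on a single plane, or $P$ cannot be determined uniquely. The error is unchanged when $P$ is multiplied by a nonzero scalar.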

For a zoom-lens camera system, however, the calibration parameters may vary as the lens settings change. The calibration problem then becomes one of finding the intrinsic and extrinsic camera parameters as functions of the controllable camera settings. For any fixed setting, these functions can be evaluated and composed to obtain the projection matrix.
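This section does not state which functional form the parameter functions take. The sketch below assumes, for illustration only, that each of the 11 parameters is a polynomial in a single zoom setting `z`. It evaluates every parameter at a fixed `z` and composes $P = K\,[R \mid t]$ using an assumed pinhole parameterization: $R = R_z R_y R_x$, and $K$ with rows $(\alpha_u, c, u_0)$, $(0, \alpha_v, v_0)$, $(0, 0, 1)$. `PARAM_NAMES`, `horner`, `projection_at` and all coefficients are hypothetical.

```python
import math

# Hypothetical parameter names; each maps to polynomial coefficients [a0, a1, ...] in z.
PARAM_NAMES = ("rx", "ry", "rz", "tx", "ty", "tz", "u0", "v0", "alpha_u", "alpha_v", "c")

def horner(coeffs, z):
    """Evaluate a0 + a1*z + a2*z**2 + ... at z."""
    result = 0.0
    for a in reversed(coeffs):
        result = result * z + a
    return result

def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

def projection_at(model, z):
    """Evaluate every parameter function at setting z, then compose P = K [R | t]."""
    p = {name: horner(model[name], z) for name in PARAM_NAMES}
    cx, sx = math.cos(p["rx"]), math.sin(p["rx"])
    cy, sy = math.cos(p["ry"]), math.sin(p["ry"])
    cz, sz = math.cos(p["rz"]), math.sin(p["rz"])
    R = matmul([[cz, -sz, 0.0], [sz, cz, 0.0], [0.0, 0.0, 1.0]],
               matmul([[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]],
                      [[1.0, 0.0, 0.0], [0.0, cx, -sx], [0.0, sx, cx]]))
    K = [[p["alpha_u"], p["c"], p["u0"]], [0.0, p["alpha_v"], p["v0"]], [0.0, 0.0, 1.0]]
    t = (p["tx"], p["ty"], p["tz"])
    return matmul(K, [R[i] + [t[i]] for i in range(3)])

# Hypothetical model: only the two scale factors change (linearly) with zoom.
model = {name: [0.0] for name in PARAM_NAMES}
model.update(tz=[10.0], u0=[320.0], v0=[240.0],
             alpha_u=[500.0, 100.0], alpha_v=[500.0, 100.0])
P_wide = projection_at(model, 0.0)   # alpha_u = alpha_v = 500
P_tele = projection_at(model, 3.0)   # alpha_u = alpha_v = 800
```

In such a scheme, the polynomial coefficients would themselves have to be estimated from calibrations at several lens settings. The polynomial form is only one possible choice of parameter function.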


Moumen T. Ahmed 2001-06-27