Next: Bibliography

Hand Tracking

Jamie Sherrah and Shaogang Gong

Tracking human body parts and motion is a challenging but essential

task for modelling, recognition and interpretation of human behaviour.

In particular, tracking of at least the head and hands is required for

gesture recognition in human-computer interface applications such as

sign-language recognition. Existing methods for markerless tracking

can be categorised according to the measurements and models

used [5]. In terms of measurements, tracking

usually relies on intensity information such as

edges [6,2,13,4],

skin colour and/or motion

segmentation [19,10,7,12],

or a combination of these with other cues including

depth [9,19,14,1].

The choice of model depends on the application of the tracker. If the

tracker output is to be used for some recognition process then a 2D

model of the body will

suffice [12,7]. On the other hand, a

3D model of the body may be required for generative purposes, to drive

an avatar for example, in which case skeletal constraints can be

exploited [19,14,4],

or deformable 3D models can be matched to 2D

images [6,13].

When tracking multiple overlapping objects such as human head and

hands under real-world conditions, three main problems are

encountered: ambiguity, occlusion and motion discontinuity.

Ambiguities arise due to distracting noise, mis-matching of the

tracked objects, and the possibility of occlusion. If the objects are

part of the same articulated entity, such as the human body, domain

knowledge can be used to resolve some of the ambiguities. A common

and robust approach for real-time tracking is to combine multiple

visual cues [17,18]. However, in

the domain of cues such as skin colour and motion, occlusion still

presents a problem because body parts such as hands can become

virtually indistinguishable. Therefore joint tracking of the body

parts must be performed with an exclusion principle on observations

[15,11]. Often depth

information and temporal dynamic models are exploited to overcome the

occlusion problem, for example

[19,10]. However depth

information requires multiple cameras, and introduces its own problems

of calibration and inaccuracy. Further, spatio-temporal continuity

cannot always be assumed as the basis for tracking. Often body motion

may appear discontinuous since the hands can move quickly and

seemingly erratically, or undergo occlusion by other body parts.

Therefore methods such as Kalman filtering that are strongly reliant

upon well-defined dynamics and temporal continuity are generally

inadequate. On the other hand, a wide range of domain knowledge beyond the

visual input is typically utilised by a human observer to reduce

reliance on spatio-temporal consistency.

The problem of tracking a person's head and hands in 2D from a single

camera view is addressed in [16]. To deal with

noise and ambiguity problems, a view-based data fusion approach is

adopted. Inexpensive visual cues, namely motion, skin colour and

coarse intensity-based orientation measures are extracted from a

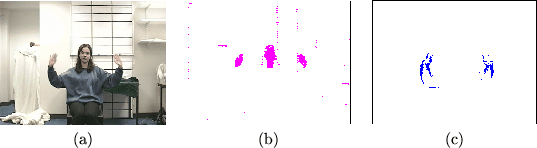

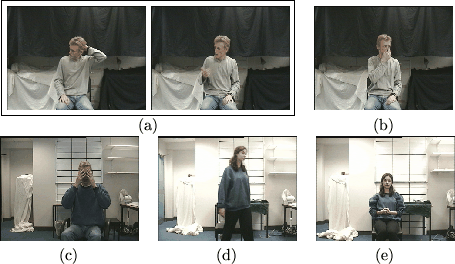

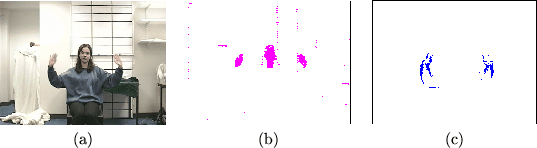

near-frontal view of a subject. Examples of these cues are shown in

Figure 1. Skin colour and motion are natural cues for

focusing attention and computational resources on salient regions in

the image. The hand orientation information is used to disambiguate

the hands when they cross over in the image. Note that although

distracting noise and background clusters appear in the skin image,

these can be eliminated at a low level by ``AND''ing directly with

motion information. However, fusion of these cues at this low level

of processing is premature and causes loss of information. For

example, the motion information generally occurs only at the edges of

the moving object, making the fused information too sparse. In this

approach the cues are fused at a higher level using a Bayesian Belief

Network.

Figure 1:

Example of visual cues measured from video stream: (a) original image; (b) binary skin colour image; and (c) binary motion image.

|

Under the assumption that the subject is approximately facing the

camera, the head skin cluster can be tracked reliably using the mean

shift algorithm dispite occlusion by the hands [3]. A

sequential connected components algorithm [8] can then

applied to a sub-sampled image to obtain a list of skin-coloured pixel

clusters in the image. Under the assumption that the head and hands

form the largest moving connected skin coloured regions in the image,

tracking the head and hands reduces to matching the previously tracked

body parts to the skin clusters in the current frame. However, skin

clusters can be indistinguishable, and only discontinuous information

is available as though a strobe light were operating, creating a

``jerky'' effect.

Moving body parts are not merely independent dynamic objects in

isolation. They correlate closely for a functional purpose,

especially when they are used to express certain semantic expressions

or behaviour. Let us now examine more closely the nature of the

underlying knowledge when we try to successfully associate and track

interacting body parts.

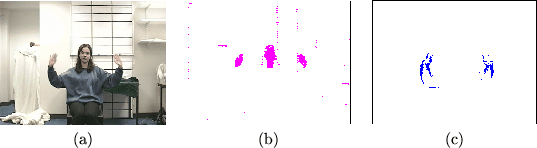

For instance, we know that at any given time a hand is either:

- 1.

- associated with a skin colour cluster,

or

- 2.

- it occludes the face (and is therefore ``invisible'' using only

skin colour) as in Figures 2(b)

and (c), or

- 3.

- it has disappeared from the

image as in Figure 2(d).

When considering both hands, the possibility arises that both hands

are associated with the same skin colour cluster, as when one clasps

the hands together for example, shown in

Figure 2(e).

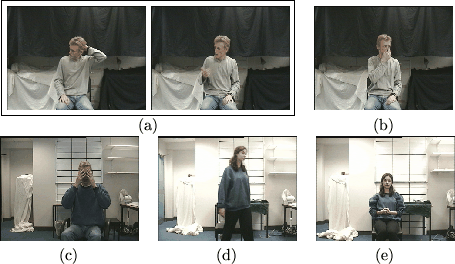

Figure 2:

Examples of the difficulties associated with tracking the body: (a) motion is discontinuous between frames; (b) one hand occludes the face; (c) both hands occlude the face; (d) a hand is invisible in the image; and (e) the hands occlude each other.

|

We assume that only the two largest skin clusters other than the head

can potentially be hands. Note that one body part can only correspond

to one cluster, but a cluster may correspond to one, two or three of

the body parts. Some examples of possible scenarios are:

- 1.

- Three clusters observed: One corresponds to the head, one to the left hand, and one to the right.

- 2.

- Two clusters observed: One corresponds to the head, the other to clasped hands.

- 3.

- One cluster observed: Both hands occlude the face, or are missing from the screen.

At first glance the situation seems trivial, however there are many

other possibilities. In scenario 1, one of the clusters may

be a distracting background object, in which case the remaining

non-head cluster may correspond to one hand, or both hands. In

scenario 2, one hand may occlude the face and the other

correspond to the non-head cluster, or the cluster may not be a hand

at all.

Given such contextual knowledge, the task is to determine which of the

skin-coloured clusters corresponds to each body part.

In [16], a discrete Bayesian network was used

to probabilistically consider these possibilities and infer the most

likely associative configuration.

Next: Bibliography

Shaogang Gong

2001-05-29