Dynamic Face Recognition Using Identity Surfaces

Yongmin Li, Shaogang Gong and Heather Liddell

Department of Computer Science

Queen Mary, University of London

1. Introduction

Recognising faces with large pose variation is a challenging problem owing to the severe nonlinearity caused by rotation in depth, self-shading and self-occlusion. Traditional techniques such as probability estimation or template matching usually cannot solve this problem satisfactorily. However, it can be solved more efficiently if the pose information is used explicitly. Based on this idea, we developed a method of multi-view face recognition using identity surfaces. The basic idea of identity surfaces is similar to the parametric eigenspace method presented by Murase and Nayar [4,5].

2. Identity Surfaces

An identity surface of a face class (all

face patterns belonging to one subject) is referred to as a unique

hyper surface in a feature space. If we only focus on the variation

of facial appearance from pose change, the feature space can be

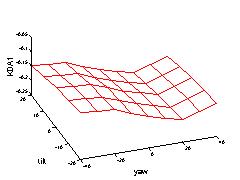

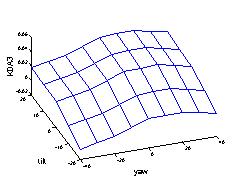

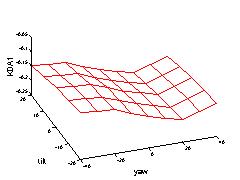

constructed as a pose parameterised feature space. As shown in Figure 1, the two basis coordinates

stand for the head pose: tilt and yaw, and the other coordinates are

used to represent the discriminating features. For each pair of tilt

and yaw, there is one unique "point" for a face class. The

distribution of all these "points" of a same face class forms a

hyper surface in this feature space. We call this surface an identity

surface.

|

Figure 1: Identity surfaces for dynamic

face recognition.
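The definition of an identity surface can be stated a little more formally. The notation below (the pose vector, the feature vector, the mapping $f_k$ and the feature dimension $d$) is introduced here for illustration only and does not come from the original text.

```latex
% Pose-parameterised feature space: pose (tilt, yaw) plus d discriminating features
\mathbf{x} = (\theta, \phi), \qquad \mathbf{z} \in \mathbb{R}^{d}

% Identity surface of face class k: exactly one feature "point" for each pose
\mathcal{S}_k = \{\, (\mathbf{x}, \mathbf{z}) : \mathbf{z} = f_k(\mathbf{x}) \,\}
```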

Using identity surfaces, face recognition can be performed dynamically from a video input rather than statically on individual images. As shown in Figure 1, when a face is detected and tracked in an input video stream, one obtains the object trajectory of the face in the feature space. The projection of this trajectory onto each identity surface, with the same pose information and temporal order, forms a model trajectory of the corresponding face class. A model trajectory can be regarded as the ideal trajectory of that face class, encoded by the same spatio-temporal information (pose information and temporal order from the video sequence) as the tracked face. Face recognition can then be carried out by matching the object trajectory against the set of model trajectories. Compared to face recognition on static images, this approach can be more reliable and accurate. For example, it is difficult to decide from a single pattern whether the pattern X in Figure 1 belongs to subject A or B. However, if we know that X is tracked along the object trajectory (red curve), it is more likely to belong to subject A than to B [3].
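The matching step described above can be sketched in a few lines. This is a minimal illustration rather than the authors' implementation: the identity surfaces are assumed to be available as plain functions of (tilt, yaw), the Euclidean distance is an assumed choice of metric, and all names and toy values (`model_trajectory`, `recognise`, the two linear surfaces) are hypothetical.

```python
import math

def model_trajectory(surface, poses):
    """Evaluate one identity surface at the tracked poses, in temporal order."""
    return [surface(tilt, yaw) for tilt, yaw in poses]

def trajectory_distance(object_traj, model_traj):
    """Sum of per-frame Euclidean distances between two trajectories."""
    return sum(math.dist(z, m) for z, m in zip(object_traj, model_traj))

def recognise(surfaces, poses, object_traj):
    """Return the face class whose model trajectory is closest to the object trajectory."""
    distances = {
        name: trajectory_distance(object_traj, model_trajectory(f, poses))
        for name, f in surfaces.items()
    }
    return min(distances, key=distances.get), distances

# Toy identity surfaces (hypothetical): 2-D features that vary linearly with pose.
surfaces = {
    "A": lambda tilt, yaw: (0.02 * yaw, 0.01 * tilt),
    "B": lambda tilt, yaw: (0.02 * yaw + 0.5, 0.01 * tilt),
}

# Tracked poses (tilt, yaw in degrees) and the observed feature vector for each frame.
poses = [(0, -20), (5, 0), (10, 20)]
object_traj = [(-0.3, 0.05), (0.1, 0.0), (0.45, 0.15)]

best, distances = recognise(surfaces, poses, object_traj)
print(best, distances)
```

Because each model trajectory is generated from the same poses and in the same temporal order as the tracked face, the comparison is always made between patterns at matching views.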

3. Constructing Identity Surfaces

If sufficient patterns of a face class in different views are available, the identity surface of this face class can be constructed precisely. However, we do not presume such a strict condition. In this work, we develop a method to synthesise the identity surface of a face class from a small sample of face patterns that sparsely cover the view sphere. These face patterns can be conveniently acquired, for example, by recording a short video sequence of the subject to be recognised. The basic idea is to approximate the identity surface using a set of Np planes separated by Nv predefined views. The problem can then be formulated as a quadratic optimisation problem, which can be solved using the interior point method.
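To make the piecewise-planar idea concrete, the sketch below evaluates a surface built from planes that meet at predefined views. It rests on several assumptions that are not in the original: the predefined views lie on a rectangular tilt/yaw grid, each grid cell is split into two triangular planes, and the feature values at the views are already known. The quadratic optimisation that estimates those values from sparse prototype patterns is not reproduced here, and all names and numbers are hypothetical.

```python
from bisect import bisect_right

def plane_value(p, tri):
    """Evaluate the plane through three (x, y, z) vertices at the 2-D point p."""
    (x1, y1, z1), (x2, y2, z2), (x3, y3, z3) = tri
    x, y = p
    det = (y2 - y3) * (x1 - x3) + (x3 - x2) * (y1 - y3)
    w1 = ((y2 - y3) * (x - x3) + (x3 - x2) * (y - y3)) / det
    w2 = ((y3 - y1) * (x - x3) + (x1 - x3) * (y - y3)) / det
    w3 = 1.0 - w1 - w2  # barycentric weights sum to one
    return w1 * z1 + w2 * z2 + w3 * z3

def cell_index(grid, v):
    """Index i of the interval [grid[i], grid[i + 1]] containing v, clamped to the grid."""
    i = bisect_right(grid, v) - 1
    return max(0, min(i, len(grid) - 2))

def surface_value(tilts, yaws, values, tilt, yaw):
    """Piecewise-planar surface; values[i][j] is the feature at (tilts[i], yaws[j])."""
    i, j = cell_index(tilts, tilt), cell_index(yaws, yaw)
    t0, t1, y0, y1 = tilts[i], tilts[i + 1], yaws[j], yaws[j + 1]
    u = (tilt - t0) / (t1 - t0)
    v = (yaw - y0) / (y1 - y0)
    # Split the cell along the diagonal from (t0, y0) to (t1, y1).
    if u >= v:
        tri = [(t0, y0, values[i][j]), (t1, y0, values[i + 1][j]),
               (t1, y1, values[i + 1][j + 1])]
    else:
        tri = [(t0, y0, values[i][j]), (t0, y1, values[i][j + 1]),
               (t1, y1, values[i + 1][j + 1])]
    return plane_value((tilt, yaw), tri)

# Hypothetical example: 9 predefined views on a 3 x 3 grid give 8 triangular planes.
tilts = [-20, 0, 20]
yaws = [-40, 0, 40]
values = [[0.0, 1.0, 0.0],
          [0.5, 2.0, 0.5],
          [0.0, 1.0, 0.0]]  # one discriminating feature dimension

print(surface_value(tilts, yaws, values, 10, 0))
```

Only one feature dimension is shown; a full surface would repeat the same evaluation for every dimension of the discriminating feature vector.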


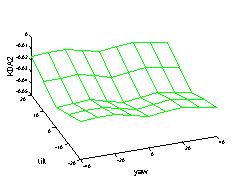

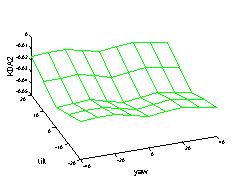

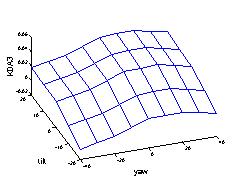

Figure 2: The identity surface synthesised from 15 prototype patterns. Only its projections in the first three dimensions of the discriminating feature are shown here. The two basis axes are pose in tilt and yaw, and the vertical axis is the discriminating feature.

Figure 2 shows the identity surface synthesised from only 15 views. A ten-dimensional discriminating feature vector is used in this example. For clarity, only the first three dimensions are shown here.

4. Dynamic Face Recognition

We demonstrate the performance of this approach on a small-scale multi-view face recognition problem. Twelve sequences, each from one of a set of 12 subjects, were used as training sequences to construct the identity surfaces. The number of frames in each sequence varies from 40 to 140. A multi-view face model [2] was fitted to these sequences to obtain the normalised facial texture patterns. Kernel Discriminant Analysis (KDA) [1] was adopted to extract the discriminating features. The identity surfaces were then constructed from these KDA features and their corresponding poses. Recognition was then performed on new test sequences of these subjects, using the same face model and KDA. Figure 3 shows sample images fitted by the multi-view face model and the normalised facial texture patterns from a test sequence.

Figure 3: Sample frames, fitted 3D shape patterns, and the normalised facial texture patterns from a test sequence.

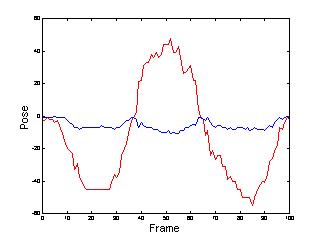

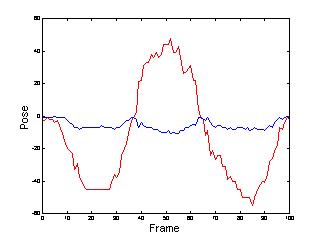

Figure 4 shows the estimated pose in tilt and yaw.

Figure 4: Pose in tilt (blue) and yaw (red).

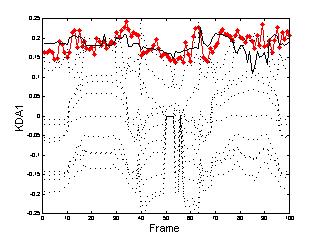

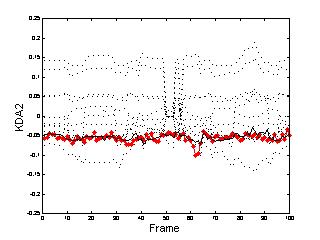

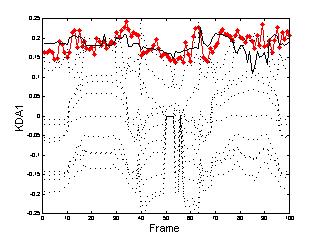

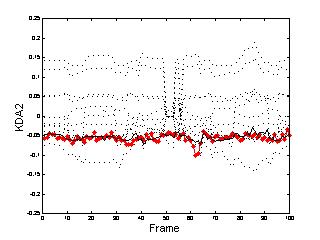

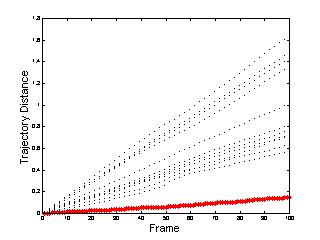

Figure 5 shows the object and model trajectories in the first two dimensions of the discriminating feature.

Figure 5: The object trajectory (red) and model trajectories in the first KDA dimension (the solid line is the model trajectory of the ground-truth subject).

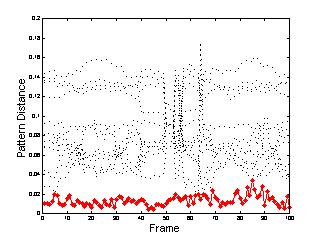

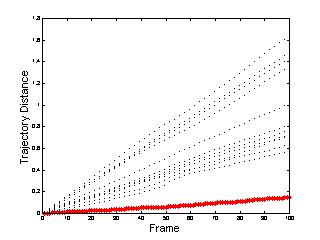

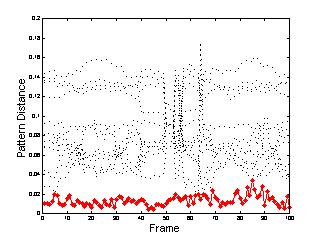

Figure 6 compares the performance of dynamic face recognition using the trajectory distance with that of static face recognition using the pattern distance on individual frames. The pattern distance is computed between an object pattern and its corresponding point on each of the identity surfaces, while the trajectory distance is the summation of the pattern distances over time. The trajectory distance provides more reliable performance, especially as its effect accumulates over time.

Figure 6: Recognition results using trajectory distance (right) compared with those using pattern distance from individual frames (left).
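The difference between the two distances can be illustrated with a small sketch. It assumes a Euclidean pattern distance and uses made-up one-dimensional trajectories; the function names and all values are hypothetical and are not the experimental data reported above.

```python
import math
from itertools import accumulate

def pattern_distances(object_traj, model_traj):
    """Per-frame distance between each object pattern and its point on one identity surface."""
    return [math.dist(z, m) for z, m in zip(object_traj, model_traj)]

def running_trajectory_distances(object_traj, model_traj):
    """Trajectory distance up to each frame: the running sum of the pattern distances."""
    return list(accumulate(pattern_distances(object_traj, model_traj)))

def decisions(per_class):
    """Closest class at each frame, given {class: [distance at each frame]}."""
    n_frames = len(next(iter(per_class.values())))
    return [min(per_class, key=lambda k: per_class[k][t]) for t in range(n_frames)]

# Toy data: the second frame is a noisy observation that lies closer to subject B.
object_traj = [(0.1,), (0.55,), (0.4,), (0.2,)]
model_trajs = {
    "A": [(0.0,), (0.2,), (0.3,), (0.1,)],
    "B": [(0.5,), (0.7,), (0.8,), (0.6,)],
}

static = decisions({k: pattern_distances(object_traj, m) for k, m in model_trajs.items()})
dynamic = decisions({k: running_trajectory_distances(object_traj, m)
                     for k, m in model_trajs.items()})
print(static)
print(dynamic)
```

In this toy case the frame-by-frame decision switches to subject B on the noisy frame, while the accumulated distance keeps subject A throughout, which is the kind of stabilising effect the trajectory distance is meant to provide.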

Yongmin Li 2001-10-14