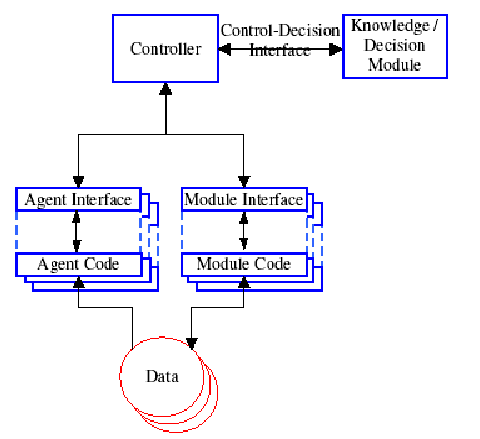

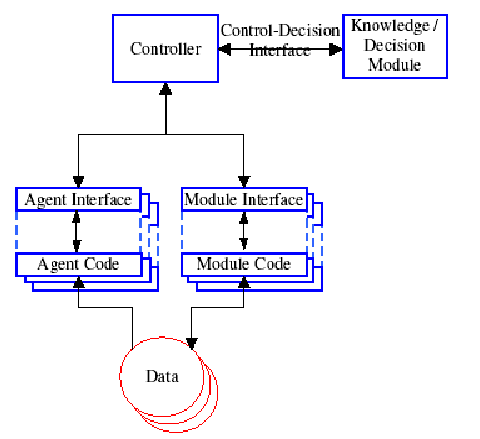

UEDIN developed an intelligent system controller based on a performance model of each module. Each module is characterised by how its result quantity, result quality and computation cost vary as a function of different parameter values. These characterisations are embedded in an induced rule structure and a neural network, either of which can be used to predict the effects of parameter changes. The system controller uses these models of individual modules to attempt to globally optimise the whole system's output result quality, quantity or rate.
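To make the idea of a per-module characterisation concrete, here is a minimal sketch. It assumes a plain lookup table in place of the rule structure or neural network described above, and the function name `best_setting`, the table layout and all numbers are hypothetical illustrations, not values from the project.

```python
def best_setting(characterisation, cost_budget):
    """Pick the parameter value with the highest predicted quality
    among those whose predicted cost fits within the budget.

    characterisation: list of (param_value, quality, cost) tuples.
    This table is a stand-in for the learned performance model.
    """
    feasible = [row for row in characterisation if row[2] <= cost_budget]
    if not feasible:
        return None  # no setting is affordable under this budget
    return max(feasible, key=lambda row: row[1])[0]


# Hypothetical characterisation of one module: (value, quality, cost).
table = [(1, 0.5, 10.0), (2, 0.8, 25.0), (3, 0.9, 60.0)]
chosen = best_setting(table, cost_budget=30.0)
```

A global controller would combine such per-module predictions across the whole pipeline; this sketch only covers the single-module case.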

Central to the integration was the description of each module using XML auto-description, which allowed plug-and-play module integration (at least at the level of the data and control interfaces; obtaining consistent semantics of the data did need some integration effort).

An example of an auto-description for a camera module follows:

    <description>
      <parameters count="1">
        <parameter name="ThetaAz" type="float" optional="no">
          <description>Azimuth for the camera</description>
          <range from="0" to="360"/>
          <default>0</default>
        </parameter>
      </parameters>
      <dataflow>
        <inputs count="1">
          <input frame="-1" dataset="FoveationPoint" />
        </inputs>
        <outputs count="1">
          <output dataset="RawImage">
            <variable name="Time" type="Time" />
            <variable name="Image" type="TBitmapByte" />
          </output>
        </outputs>
      </dataflow>
    </description>
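As an illustration of how a controller might read such an auto-description, the following sketch parses the camera example with Python's standard `xml.etree.ElementTree`. The function name `parse_description` and the returned dictionary layout are assumptions made for this example, as is the guess that `optional="yes"` marks an optional parameter (only `"no"` appears in the sample).

```python
import xml.etree.ElementTree as ET

# The camera auto-description from the text, reproduced as a string.
CAMERA_DESCRIPTION = """\
<description>
  <parameters count="1">
    <parameter name="ThetaAz" type="float" optional="no">
      <description>Azimuth for the camera</description>
      <range from="0" to="360"/>
      <default>0</default>
    </parameter>
  </parameters>
  <dataflow>
    <inputs count="1">
      <input frame="-1" dataset="FoveationPoint" />
    </inputs>
    <outputs count="1">
      <output dataset="RawImage">
        <variable name="Time" type="Time" />
        <variable name="Image" type="TBitmapByte" />
      </output>
    </outputs>
  </dataflow>
</description>
"""


def parse_description(xml_text):
    """Extract parameters, input datasets and output datasets."""
    root = ET.fromstring(xml_text)
    parameters = {}
    for p in root.findall("./parameters/parameter"):
        rng = p.find("range")
        parameters[p.get("name")] = {
            "type": p.get("type"),
            # Assumption: "yes" means optional; the sample only shows "no".
            "optional": p.get("optional") == "yes",
            "range": (float(rng.get("from")), float(rng.get("to")))
            if rng is not None else None,
            "default": p.findtext("default"),  # kept as text
        }
    inputs = [i.get("dataset")
              for i in root.findall("./dataflow/inputs/input")]
    outputs = {
        o.get("dataset"): {v.get("name"): v.get("type")
                           for v in o.findall("variable")}
        for o in root.findall("./dataflow/outputs/output")
    }
    return {"parameters": parameters, "inputs": inputs, "outputs": outputs}


camera = parse_description(CAMERA_DESCRIPTION)
```

With the inputs and outputs of every module extracted this way, matching one module's output dataset names against another's input dataset names is enough to wire modules together at the interface level.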

The controller executed those modules that had all of their input data available, guided by a knowledge base that predicted optimal performance. The predictions were also used to estimate optimal parameter settings. The prediction process used some specialised agents that interrogated the current state. A view of the controller structure is:

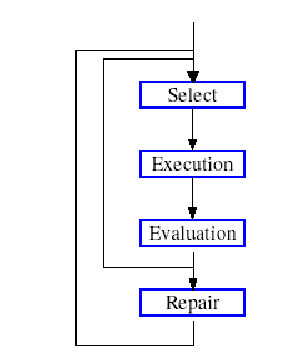
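The module selection step described above can be sketched as follows. This is only an illustration under stated assumptions: the knowledge base is reduced to a caller-supplied `predict` function, and the names `runnable_modules`, `select_module` and the module name `tracker` are hypothetical (the dataset names come from the camera example).

```python
def runnable_modules(modules, available_datasets):
    """Return the names of modules whose every input dataset is available."""
    return [name for name, spec in modules.items()
            if set(spec["inputs"]) <= available_datasets]


def select_module(modules, available_datasets, predict):
    """Among runnable modules, pick the one with the best predicted score.

    predict: callable mapping a module name to a predicted performance
    value; here it stands in for the knowledge base.
    """
    candidates = runnable_modules(modules, available_datasets)
    if not candidates:
        return None  # nothing can run yet
    return max(candidates, key=predict)


# Hypothetical two-module system.
modules = {
    "camera": {"inputs": ["FoveationPoint"]},
    "tracker": {"inputs": ["RawImage"]},
}
ready = runnable_modules(modules, {"FoveationPoint"})
```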

The main control loop consisted of selecting a module to execute, evaluating its performance and then possibly adjusting some parameters and rerunning the module:

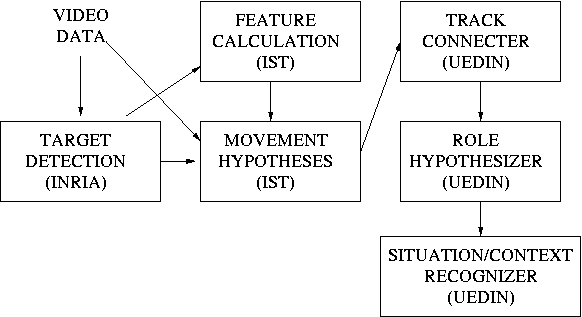
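The evaluate, adjust and rerun part of this loop might look like the sketch below. The function `run_with_tuning`, its arguments and the stopping rule (a target score plus a cap on reruns) are assumptions for illustration; the source does not specify how the controller decided when to stop.

```python
def run_with_tuning(run, evaluate, adjust, params, target, max_reruns=3):
    """Run a module, score the result, and rerun with adjusted
    parameters while the score is below target (bounded reruns).

    run:      callable(params) -> result
    evaluate: callable(result) -> score (higher is better)
    adjust:   callable(params, score) -> new params
    """
    result = run(params)
    score = evaluate(result)
    reruns = 0
    while score < target and reruns < max_reruns:
        params = adjust(params, score)
        result = run(params)
        score = evaluate(result)
        reruns += 1
    return params, result, score, reruns


# Toy usage: a stand-in "module" that just returns its azimuth,
# scored by closeness to a hypothetical ideal of 90 degrees.
def toy_run(p):
    return p["ThetaAz"]

def toy_evaluate(r):
    return 1.0 - abs(r - 90) / 90

def toy_adjust(p, score):
    return {"ThetaAz": p["ThetaAz"] + 30}

final_params, final_result, final_score, n_reruns = run_with_tuning(
    toy_run, toy_evaluate, toy_adjust, {"ThetaAz": 0}, target=0.9, max_reruns=5
)
```

Capping the number of reruns keeps the loop from stalling on a module whose quality cannot reach the target at any parameter setting.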

UEDIN led the integration effort, connecting the INRIA target detection module, the UEDIN track formation module, the IST dense and sparse feature detection modules, the IST movement classification module, the UEDIN rule-based and statistical behaviour recognition modules, and the UEDIN statistics module.

A flow diagram of the integrated system follows:

In this architecture, we experimented with recognizing behaviours in both the INRIA lobby and Lisbon shopping centre sequences.

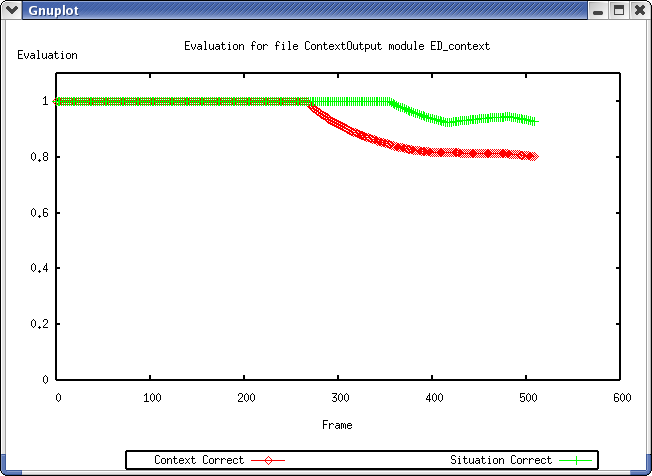

An example of the recognition results for one of the Lisbon shopping centre sequences is shown here.

This graph shows the performance of the situation and context classification modules in comparison to the ground truth as a function of the frame number. It shows good recognition of multiple people until about frame 300, where one person performs a sequence of activities that are not modelled (browsing in and out of the store).

Some papers that describe the architecture are:
