Research

Principles of Neural Circuit Computation

The overarching goal of research in our group is to understand 1) how the brain transforms the sensory signals it receives into distributed and computationally efficient neural representations, and 2) how these internal representations are subsequently manipulated and combined to perform the powerful and flexible computations that underlie perceptual decision making and other cognitive functions. To address this challenge, we combine a number of complementary approaches to investigate both the computations performed by the brain and the circuit mechanisms that give rise to them.

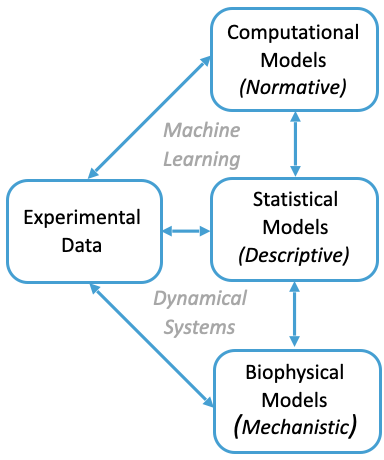

Computations in the brain emerge through the collective dynamics of interconnected networks of neurons driven by time-varying signals from the environment. As such, we make extensive use of dynamical systems theory, both to build mechanistic models of neural circuits and to develop statistical models that infer the dynamical processes underlying activity measured in large-scale neural recordings. In addition, we build normative models of neural computations by asking how a given perceptual task could be solved optimally. By integrating these "bottom-up", "data-modelling", and "top-down" approaches, we aim to arrive at complete theories, grounded in experimental data, of how perceptual and cognitive functions emerge in the brain.

Learning and Attention in Visual Cortex

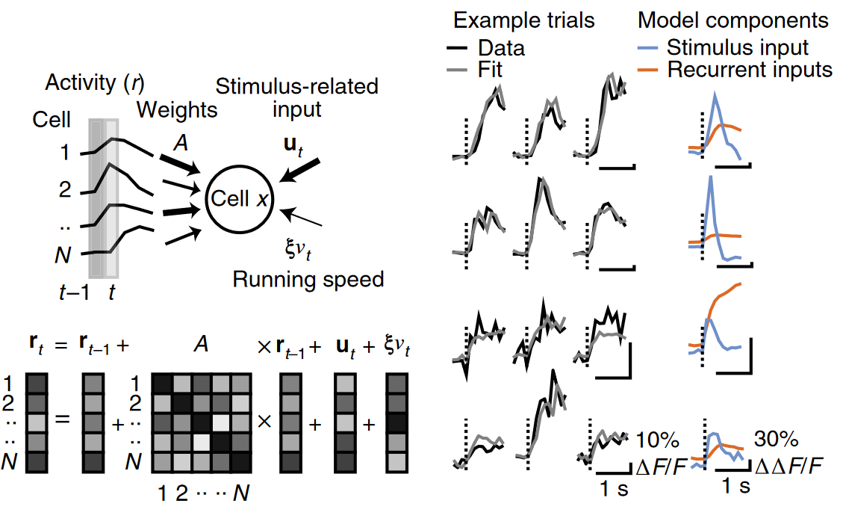

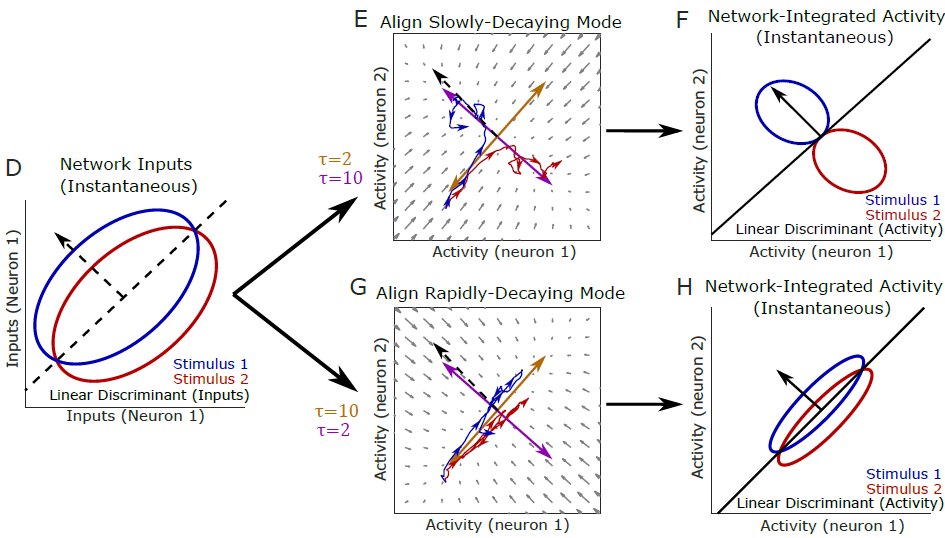

Cortical circuits adapt their processing of incoming sensory information with experience and according to behavioural context. These changes in sensory processing occur over multiple time scales, with learning changing cortical representations over periods of days, weeks and years, and attention rapidly modulating cortical activity on a moment-to-moment basis. We aim to understand how changes in cortical representations and dynamics emerge over multiple time scales by 1) developing and applying methods for the analysis of large-scale cortical activity during perceptual behaviours, and 2) developing mathematical models of neural circuits to understand how changes in connectivity and top-down inputs can improve the integration and transmission of sensory information.

We have developed a method, based on MultiVariate AutoRegressive (MVAR) linear dynamical system modelling, to infer neural circuit dynamics from experimentally recorded activity patterns. We applied this method to uncover changes in functional interactions between excitatory and inhibitory cell types as mice learned to discriminate visual patterns (Khan*, Poort*, Chadwick* et al., Nat. Neurosci. 2018).
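For readers unfamiliar with MVAR modelling, the core fitting step can be illustrated with a short Python sketch. It assumes a first-order model, x[t+1] = A x[t] + b + noise, where x[t] is the vector of activity of N simultaneously recorded neurons and the off-diagonal entries of A are read as functional interactions between them; A and b are fitted by ordinary least squares with NumPy. This is a generic illustration rather than the published method, which may include additional terms (for example stimulus or behavioural inputs); the function name `fit_mvar` and the simulated two-unit example are hypothetical.

```python
import numpy as np

def fit_mvar(activity):
    """Fit a first-order MVAR model x[t+1] = A @ x[t] + b + noise by least squares.

    activity: array of shape (T, N), i.e. T time points for N neurons
              (a hypothetical data layout chosen for this sketch).
    Returns (A, b): A is the N x N interaction matrix, b the N-vector of offsets.
    """
    past = activity[:-1]    # x[t],   shape (T-1, N)
    future = activity[1:]   # x[t+1], shape (T-1, N)
    # Append a column of ones so the offset b is fitted jointly with A.
    design = np.hstack([past, np.ones((past.shape[0], 1))])
    # Solve future ~= design @ W for W of shape (N+1, N).
    W, *_ = np.linalg.lstsq(design, future, rcond=None)
    A = W[:-1].T            # row i: influence of each neuron's past activity on neuron i
    b = W[-1]               # constant offset for each neuron
    return A, b

# Usage: simulate two coupled units (loosely, an "excitatory" unit that
# is inhibited by and excites an "inhibitory" unit) with a known A.
rng = np.random.default_rng(0)
A_true = np.array([[0.8, -0.3],
                   [0.4,  0.5]])   # eigenvalues inside the unit circle, so stable
b_true = np.array([0.1, 0.2])
T = 5000
x = np.zeros((T, 2))
for t in range(T - 1):
    x[t + 1] = A_true @ x[t] + b_true + 0.1 * rng.standard_normal(2)

A_hat, b_hat = fit_mvar(x)
print(np.round(A_hat, 2))
```

Because the simulated data are generated from a known A, the fitted matrix can be compared directly with it. With real recordings A is unknown, and its structure (for example, the sign and strength of interactions between cell types, and how these change over learning) is what the analysis aims to reveal.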

More recently, we have extended this analysis to investigate changes with attention (Poort et al., Neuron 2022) and developed a theoretical framework to explain how changes in recurrent circuit dynamics over learning can account for improved representations of task-relevant stimuli (Chadwick et al., Neuron 2022). Future projects will investigate how learning and attention reorganise the dynamical coordination of multiple brain areas involved in distinct aspects of a perceptual task.

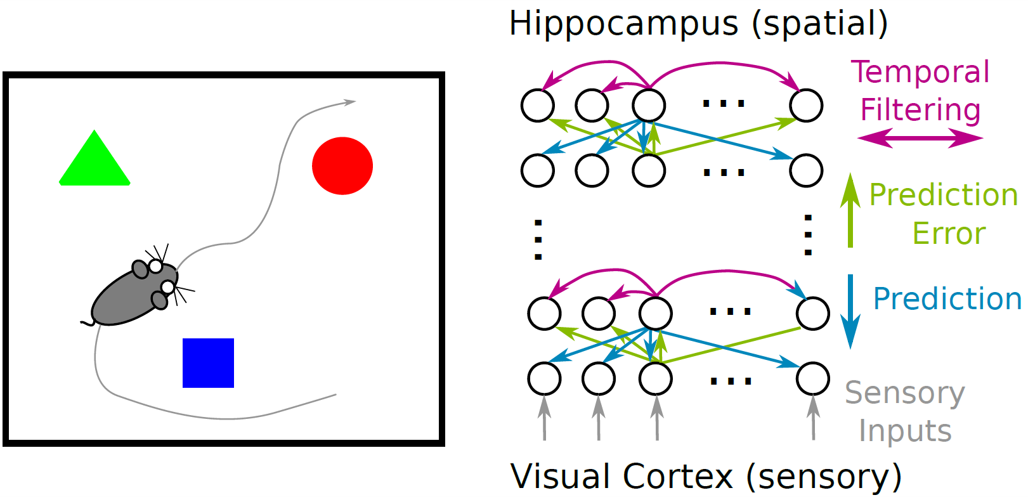

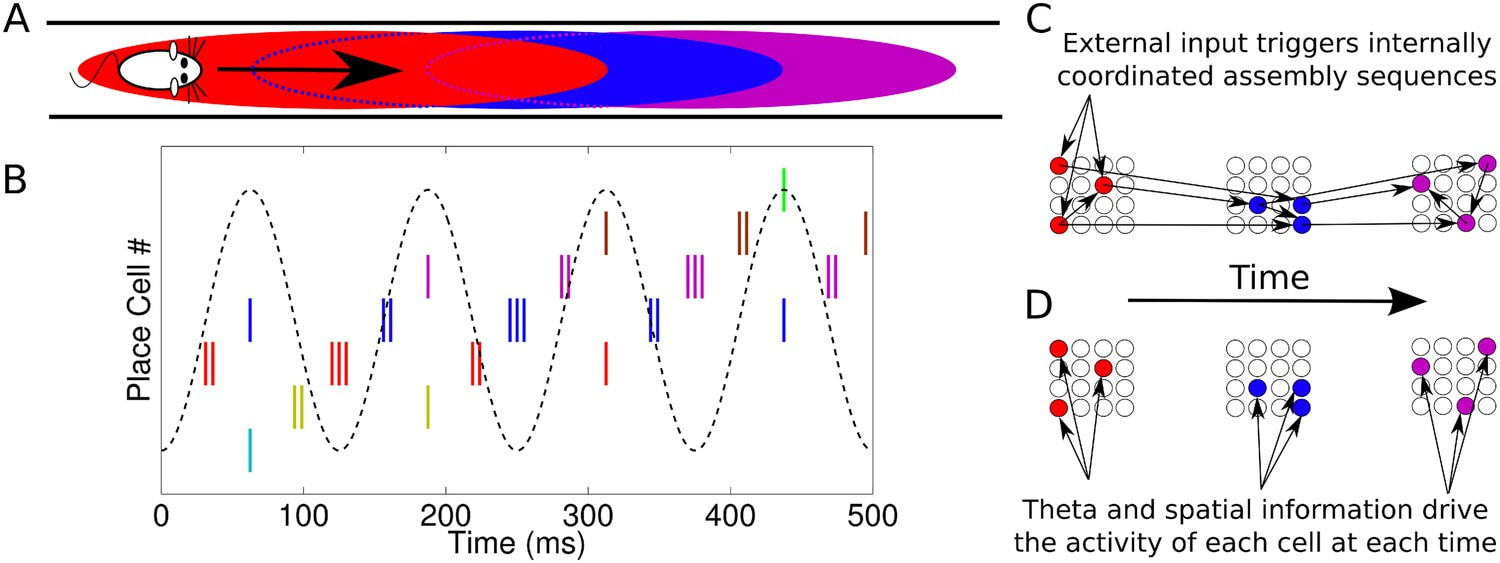

Representation of Space in the Hippocampus and Entorhinal Cortex

Neural circuits in the hippocampus and entorhinal cortex are involved in spatial memory and navigation. We are interested in the population-level codes at play in these circuits, including how fine-scale temporal aspects of the neural code are coordinated across neurons and how such coordination influences the capacity to represent multiple unique spatial experiences (see Chadwick et al., 2015, 2016, eLife). Future projects will investigate whether these fine-scale temporal activity patterns can be explained as emerging from probabilistic inference of self-location based on noisy visual and motor signals.

Models for Visuo-Spatial Processing

Visual perception is fundamentally organised by space, and accurate perception of the spatial organisation of our environment is crucial for flexible and adaptive behaviour. We aim to develop theories of how visual, spatial, and motor signals are integrated in the brain to solve this task. In one project, we will investigate how spatial signals influence the processing of incoming visual information using hierarchical neural network models and analysis of data from mouse virtual reality experiments.