Capacity is a key characteristic of Hopfield network learning algorithms. There are several ways of calculating it; the suitability of each depends on the nature of the learning algorithm.

Replica-theoretic statistical mechanics approaches can be used for Hebbian algorithms or the pseudo-inverse method, and many researchers have taken this route. Another approach in common use is a 'signal-to-noise' type of probabilistic analysis. This is often done heuristically, although rigorous probabilistic arguments have also been obtained.
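To make the signal-to-noise idea concrete, the following is a minimal sketch for the standard Hebb rule with random ±1 patterns. It is an illustration only, not the analysis used in this work. The function names (`hebbian_weights`, `signal_and_noise`, `fraction_stable`) and the choice of a zero diagonal with 1/N scaling are assumptions made for the example. The local field at a neuron is split into a signal term, which favours the stored bit, and a crosstalk (noise) term from the other patterns. For random patterns the crosstalk has variance of roughly the number of patterns divided by the number of neurons.

```python
import random


def hebbian_weights(patterns):
    """Hebb rule: w_ij = (1/N) * sum over patterns of xi_i * xi_j, zero diagonal."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w


def signal_and_noise(patterns, mu, i):
    """Split the local field at neuron i, for stored pattern mu, into two parts.

    signal: contribution of pattern mu itself, equal to xi_i * (N - 1) / N
    noise:  crosstalk contributed by all the other stored patterns
    """
    n = len(patterns[0])
    xi = patterns[mu]
    signal = xi[i] * (n - 1) / n
    noise = sum(
        p[i] * p[j] * xi[j]
        for nu, p in enumerate(patterns) if nu != mu
        for j in range(n) if j != i
    ) / n
    return signal, noise


def fraction_stable(n, m, rng):
    """Fraction of stored bits whose local field has the same sign as the bit."""
    patterns = [[rng.choice((-1, 1)) for _ in range(n)] for _ in range(m)]
    stable = 0
    for mu in range(m):
        for i in range(n):
            signal, noise = signal_and_noise(patterns, mu, i)
            if (signal + noise) * patterns[mu][i] > 0:
                stable += 1
    return stable / (n * m)


if __name__ == "__main__":
    rng = random.Random(0)
    n = 40  # number of neurons (illustrative value)
    for m in (2, 8, 20):  # number of stored patterns (illustrative values)
        print(f"load m/n = {m / n:.2f}: stable fraction = {fraction_stable(n, m, rng):.3f}")
```

A bit is stable exactly when the crosstalk does not outweigh the signal, so the stable fraction can be expected to fall as the load m/n grows. The heuristic signal-to-noise argument turns this observation into a capacity estimate by treating the crosstalk as approximately Gaussian.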

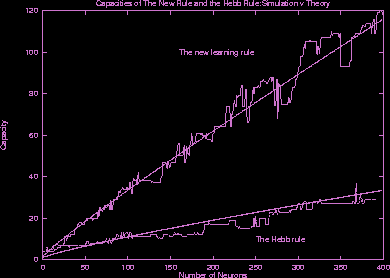

For the new learning methods which I have developed, the replica approach is not feasible. Unfortunately, a proper probabilistic analysis using the signal-to-noise approach cannot be carried out in the usual way either. A different route is therefore followed: using various bounding theorems, together with graphical representation and manipulation, the capacity of the new learning rule is found to be much greater than that of the Hebb rule.