Ultrasound work

I have been involved with research using ultrasound imaging since 2010. This work has mainly focused on using ultrasound as an articulography technique, that is, to record the movements of the tongue inside the mouth as a person talks. Here is an example of one aspect of this research: developing a robust method to automatically track the position of the tongue in an ultrasound video sequence.

This video shows an example ultrasound recording of a child saying "k... aka... iki... oko". The grainy greyscale image is the ultrasound recording itself, and the overlaid magenta contour shows the output of the algorithm I developed to track the location of the tongue.

Robust automatic tongue tracking

I started this work during the Ultrax project, and it formed a major part of the research for that three-year project. The project has now finished, but further developments and refinements are still ongoing.

The Ultrax project was undertaken to address the need for visual feedback of mouth movements during speech, which could then be used in speech therapy for children.

Roughly 1 in 15 UK children have a Speech Sound Disorder (SSD), a condition in which a person has difficulty pronouncing one or more of the speech sounds of their native language. SSDs make it more difficult to communicate with others and can lead to isolation. Providing real-time visual feedback of a user's tongue movements would be a simple yet powerful remediation aid in speech therapy. Being able to show children their own attempts alongside the required articulation avoids the need to describe it in complex language.

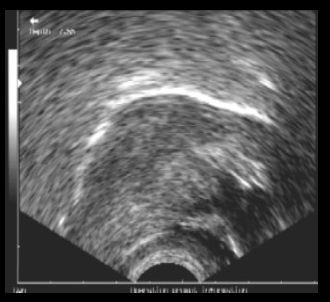

By placing a simple B-mode ultrasound scanning probe under the chin, it is possible to observe some of the tongue contour. For example, here's a standard scan showing what the tongue typically looks like:

But there are several problems with using raw ultrasound video:

- the image is grainy, with a lot of noise

- the tongue contour is discontinuous and often breaks up

- the tongue tip may not be visible

- multiple extra high-contrast edges and other visual clutter are present, which can be very confusing

- crucially, the passive articulators (the roof of the mouth, the teeth) are not visible

- there is not even an indication of which way the head is facing!

Therefore, the aim of the Ultrax project was to use ultrasound to estimate the position and configuration of the tongue at each time step, but to display to the user a diagram of the full vocal tract that is animated in real time, rather than the raw ultrasound data itself. This animated diagrammatic display can avoid all the problems inherent in raw ultrasound video listed above.
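One way to picture the display side of this design is a fixed mapping from ultrasound-image coordinates into the coordinate frame of the vocal tract diagram, so that a tracked contour can be drawn on the diagram in place of the raw image. The sketch below assumes that the probe's rotation, scale and offset relative to the head are known from some calibration step; `to_diagram_coords` and its parameters are hypothetical and not taken from Ultrax.

```python
import math


def to_diagram_coords(contour, angle_deg, scale, offset):
    """Map (x, y) points from ultrasound-image space into diagram space.

    Hypothetical helper: applies a similarity transform (rotate by angle_deg,
    scale uniformly, then translate by offset). The parameters are assumed to
    come from a calibration of the probe position relative to the head.
    """
    theta = math.radians(angle_deg)
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    ox, oy = offset
    return [
        (scale * (cos_t * x - sin_t * y) + ox,
         scale * (sin_t * x + cos_t * y) + oy)
        for x, y in contour
    ]


# Per video frame: the tracker yields a contour in image coordinates, and the
# display only ever draws its mapped version on the vocal tract diagram.
tracked = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.5)]
diagram_points = to_diagram_coords(tracked, angle_deg=90, scale=2.0, offset=(10.0, 5.0))
print(diagram_points)
```

Because the transform is fixed, the diagram always shows which way the head is facing and where the passive articulators sit, even though neither is visible in the ultrasound image.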

As a first step, an algorithm is needed to track the tongue location in the raw ultrasound video. A few algorithms exist, the best known of which is probably EdgeTrak. However, this is a hard problem, and no existing method was truly automatic: all of them needed human intervention, both to initialise the algorithm and to step in when tracking went astray. In addition, existing methods ran too slowly, often many times slower than real time, which meant they could not be used to provide online visual feedback. The video above therefore shows an example of an algorithm I developed, using a combination of signal processing and machine learning techniques, that tracks the tongue contour in real time and completely automatically.
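To give a flavour of what automatic contour tracking involves, here is a minimal sketch of a well-known generic technique, dynamic programming over image columns. It is not the signal processing and machine learning method described above. It assumes the frame has been resampled so the tongue runs roughly left to right, and it picks one row per column so that the path is bright, smooth (bridging gaps where the contour breaks up) and close to the previous frame's contour. The function `track_contour` and its `smooth` and `temporal` weights are hypothetical.

```python
def track_contour(image, prev=None, smooth=1.0, temporal=0.5):
    """Return one row index per column tracing a bright, smooth contour.

    Dynamic programming over columns minimises
        sum_x [ -image[y_x][x] + temporal * |y_x - prev[x]| ]
        + smooth * sum_x |y_x - y_{x-1}|
    where prev (optional) is the contour found in the previous frame.
    """
    rows, cols = len(image), len(image[0])

    def unary(x, y):
        # Bright pixels are cheap; straying from the previous contour costs extra.
        cost = -image[y][x]
        if prev is not None:
            cost += temporal * abs(y - prev[x])
        return cost

    # best[x][y]: minimum cost of a path over columns 0..x that ends at row y.
    best = [[0.0] * rows for _ in range(cols)]
    back = [[0] * rows for _ in range(cols)]
    for y in range(rows):
        best[0][y] = unary(0, y)
    for x in range(1, cols):
        for y in range(rows):
            # Predecessor row minimising accumulated cost plus smoothness penalty.
            py = min(range(rows), key=lambda p: best[x - 1][p] + smooth * abs(y - p))
            best[x][y] = best[x - 1][py] + smooth * abs(y - py) + unary(x, y)
            back[x][y] = py

    # Backtrack from the cheapest row in the last column.
    y = min(range(rows), key=lambda r: best[cols - 1][r])
    path = [y]
    for x in range(cols - 1, 0, -1):
        y = back[x][y]
        path.append(y)
    return path[::-1]


# Synthetic 5x6 frame: a bright edge on row 2 with a gap at column 3.
frame = [[0] * 6 for _ in range(5)]
for col in range(6):
    if col != 3:
        frame[2][col] = 100
print(track_contour(frame))  # [2, 2, 2, 2, 2, 2]
```

Passing the previous frame's result as `prev` is what turns per-frame detection into tracking; the cost is O(columns × rows²) per frame, so a real-time system would need a much more efficient formulation than this toy version.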